How Manna Used A/B Testing To Increase Add-To-Cart Clicks

About Manna Natural Cosmetics

Manna is a Hungary-based online store that sells chemical-free, organic, and handmade personal-care products like soaps, body butters, and essential oils. Its products are available for purchase in Hungary, Germany, Austria, and Serbia.

Goals

As a Hungarian company, the brand faced customer trust issues in markets such as Germany. Its main goal was to increase clicks on the Add to Cart CTA button by building customer confidence. The team aimed to do this by adding third-party product test certifications and internationally recognized payment icons to the product page, and to validate the change through A/B testing.

Tests run

The company wanted its website to build customer confidence by displaying third-party product test certifications and internationally recognized payment icons.

This is what control looked like:

The team at Manna hypothesized that adding the product quality certification logos and payment icons near the Add to Cart CTA button on the product page would increase clicks on the button and hence sales. The team decided to A/B test the original (control) against two variations.

The first version had a big banner showing the payment icons in one row and 3 product test certification logos just below. This is what variation 1 looked like:

The banner in the second version showed 2 rows of various product test certificates; there were no payment icons. This is what variation 2 looked like:

Using the VWO platform, the test was run on more than 3,000 visitors, split equally among the 3 versions. The goal tracked was the number of clicks on the Add to Cart CTA button.

Conclusion

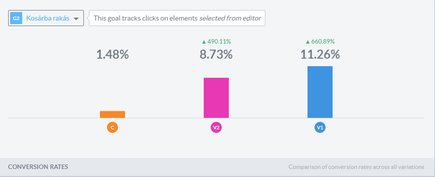

Variation 1 beat both the original (control) and variation 2: 11.26% of the visitors who viewed this version clicked the Add to Cart button. The figures for variation 2 and the control were 8.73% and 1.48%, respectively. The VWO-generated bar graph above shows the conversion rates for the control and the 2 variations.
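For readers who want to sanity-check a gap like this, the sketch below runs a standard two-proportion z-test on the reported rates. The case study gives only "more than 3,000 visitors" split equally, so the figure of 1,000 visitors per version is an assumption made purely for illustration, and the function name is hypothetical. This is not a description of how VWO computes its own statistics.

```python
import math


def two_proportion_z_test(rate_a, n_a, rate_b, n_b):
    """Return (z, two_sided_p) for the difference between two conversion rates."""
    # Pooled conversion rate under the null hypothesis of no difference.
    pooled = (rate_a * n_a + rate_b * n_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_a - rate_b) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# ASSUMPTION: about 1,000 visitors per version; the exact counts are not published.
ASSUMED_VISITORS_PER_VERSION = 1000

z, p = two_proportion_z_test(
    0.1126, ASSUMED_VISITORS_PER_VERSION,  # variation 1
    0.0148, ASSUMED_VISITORS_PER_VERSION,  # control
)
print(f"z = {z:.2f}, two-sided p = {p:.3g}")
```

Under that assumed sample size, the difference between variation 1 and the control is far larger than chance alone would plausibly produce; the conclusion would need rechecking against the real per-version counts.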

As always, it is instructive to go behind the data to understand what caused the evident changes in customer behavior.

Given its age and provenance, the Manna brand could not rely only on product quality and great product pages on its website to sell. It needed to reiterate product authenticity, something that both variations helped do. That this mattered to buyers was borne out by the fact that variation 2, which showed only certifications, recorded a 490% increase in the click rate over the control (8.73% versus 1.48%).
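The 490% figure is a relative lift over the control, not a difference in percentage points. A minimal sketch of the arithmetic, using the conversion rates reported above (the function and variable names are illustrative, not part of any VWO API):

```python
def relative_lift(variant_rate: float, control_rate: float) -> float:
    """Return the percentage change of variant_rate relative to control_rate."""
    if control_rate <= 0:
        raise ValueError("control_rate must be positive")
    return (variant_rate - control_rate) / control_rate * 100.0


# Conversion rates (in percent) as reported in the case study.
control = 1.48
variation_1 = 11.26
variation_2 = 8.73

lift_v2 = relative_lift(variation_2, control)
lift_v1 = relative_lift(variation_1, control)

print(f"Variation 2 lift over control: {lift_v2:.0f}%")  # 490%
print(f"Variation 1 lift over control: {lift_v1:.0f}%")  # 661%
```

By the same calculation, the winning variation 1 lifts the click rate by roughly 661% over the control.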

But buyers also needed reassurance that they could pay using options they were comfortable with and that were well known for their data protection and privacy practices. Variation 1 offered payment safety assurance along with product authenticity and quality assurance. Not surprisingly, this variation performed the best.

Location

Hungary

Industry

Retail

Impact

490% increase in click-through rate