If you make any methodological mistakes while running an experiment in VWO, a warning is added to your test report. These warnings flag errors introduced by actions such as changing variation content or goal settings after the A/B test has started.

With this update, we are changing where these warnings are shown. We have also added a detailed explanation of the types of errors that can be introduced in an A/B test and the recommended way to avoid them. For each error type, you see the list of actions that caused the warning, which user made each change, and when.

One such error is Simpson’s paradox, which can be introduced if you change the traffic split between variations after starting the test. Say you start a test with two variations on a 90%-10% traffic split because you want to make sure the new version doesn’t break, and after a few days you change the split to 50%-50%. You might think you were being cautious with a new change and then put the test on the right track. In fact, many visitors have already been assigned to the original version; they will continue to see it and have more chances to convert.

So even if the variation's conversion rate is higher than the original's in every period, the effect might reverse when the data is combined, because the control collected far more of its visitors during the period when conversion rates were higher. See the table below:

| | Day 1 (Control: 90%, Variation: 10%) | Day 2 (Control: 50%, Variation: 50%) | Total |
| --- | --- | --- | --- |
| Control (conversions/visitors) | 40/900 = 4.44% | 5/500 = 1% | 45/1400 = 3.21% |
| Variation (conversions/visitors) | 5/100 = 5% | 6/500 = 1.2% | 11/600 = 1.83% |
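The arithmetic in the table can be checked with a short script. This is only an illustrative sketch: the `data` layout and the helper names `rate` and `pooled_rate` are made up for this example and are not part of VWO. It shows that the variation wins on each individual day yet loses once the days are pooled.

```python
# (conversions, visitors) per day, copied from the table above.
data = {
    "Control": [(40, 900), (5, 500)],
    "Variation": [(5, 100), (6, 500)],
}


def rate(conversions, visitors):
    """Conversion rate for a single period."""
    return conversions / visitors


def pooled_rate(days):
    """Conversion rate after summing conversions and visitors over all periods."""
    total_conversions = sum(c for c, _ in days)
    total_visitors = sum(v for _, v in days)
    return total_conversions / total_visitors


for name, days in data.items():
    daily = ", ".join(f"{rate(c, v):.2%}" for c, v in days)
    print(f"{name}: daily {daily}; pooled {pooled_rate(days):.2%}")
```

The pooled rate is a visitor-weighted average of the daily rates. Control draws 900 of its 1,400 visitors from the high-converting first day, while the variation draws only 100 of its 600 from that day, which is why the combined comparison flips.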

You can read more about Simpson’s paradox here and here.

The warnings also try to guard your test results against:

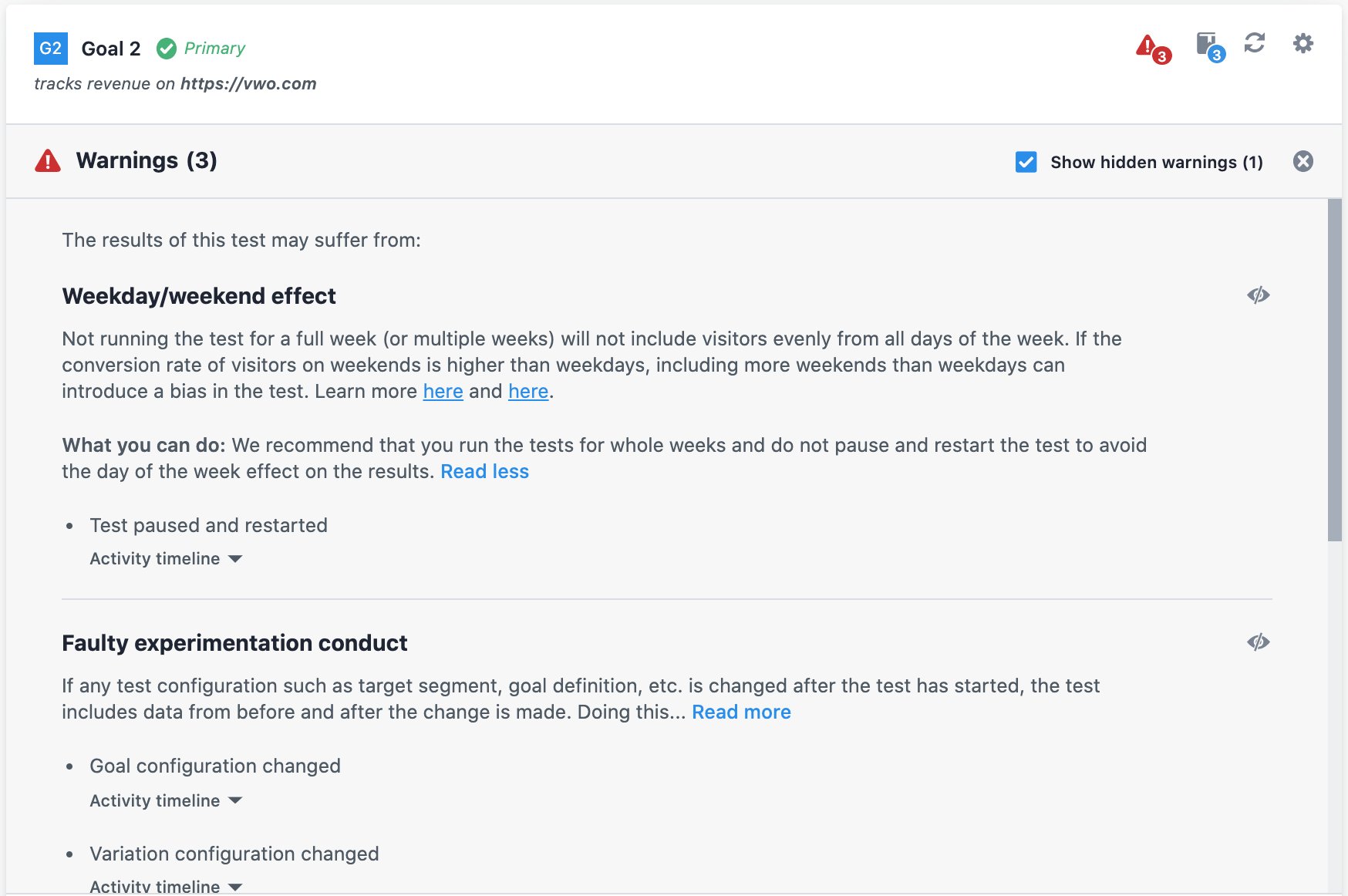

- Weekday/weekend effect: This can be introduced if the days of the week are not evenly represented in the test. If the conversion rate of visitors is higher on weekends than on weekdays, including proportionally more weekend days than weekdays can bias the results. Read more here and here.

- Faulty experiment conduct: If you change the experiment configuration after it has gone live, you risk polluting the collected data, which can lead to an erroneous calculation of statistical significance.
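As a rough illustration of the weekday/weekend point, the sketch below counts how often each day of the week falls inside a test's date range. It is not how VWO detects the issue; the function names `weekday_counts` and `covers_whole_weeks` and the sample dates are assumptions made for this example.

```python
from collections import Counter
from datetime import date, timedelta


def weekday_counts(start, end):
    """Count occurrences of each weekday (0 = Monday ... 6 = Sunday), inclusive."""
    days = (end - start).days + 1
    return Counter((start + timedelta(days=i)).weekday() for i in range(days))


def covers_whole_weeks(start, end):
    """True when every day of the week occurs equally often in the range."""
    counts = weekday_counts(start, end)
    return len(counts) == 7 and len(set(counts.values())) == 1


# Hypothetical test window: Friday 1 March 2024 through Sunday 10 March 2024.
start, end = date(2024, 3, 1), date(2024, 3, 10)
print(weekday_counts(start, end))
print(covers_whole_weeks(start, end))
```

In this window Friday, Saturday, and Sunday each occur twice while the other days occur once, so weekend traffic is over-represented. Extending the window to 14 March would cover two full weeks.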

You can now also hide a warning from a test report. While warnings inform you of errors you might have made while running the test, there might be instances where such changes are unavoidable.

Suppose you created the test on a staging environment, and after verifying everything is working fine, you change the URL and Goal settings to match the production environment. This gives you a warning that you’ve changed your test settings after starting it. Since you know the warning is not applicable in this scenario, you can hide the warning.

To learn more about warnings, please refer to this knowledge base article. We’re in the process of rolling out this feature. It will soon be available in your account.