At VWO, we understand that as CRO practitioners and marketers, conversions are the holy grail for you. Over the last ten years of working with hundreds of companies, we have also learned that there is no fixed, linear path to conversion rates you would be proud of.

We’ve helped hundreds of optimizers across industries and geographies, and found common patterns in the mistakes they’re making. In this article, we discuss the top 10 of these mistakes that CRO practitioners must avoid.

If you’re mindful of these 10 possible roadblocks, you can run your programs more successfully and make time to tackle the less common problems that are specific to your organization.

1. Testing without a roadmap

In our experience, many CRO programs fail because of bad timing. For example, tests run during the holiday season are likely to yield unreliable results for a SaaS business, because much of the target audience is on vacation. Acting on such results can hurt website performance instead of improving it.

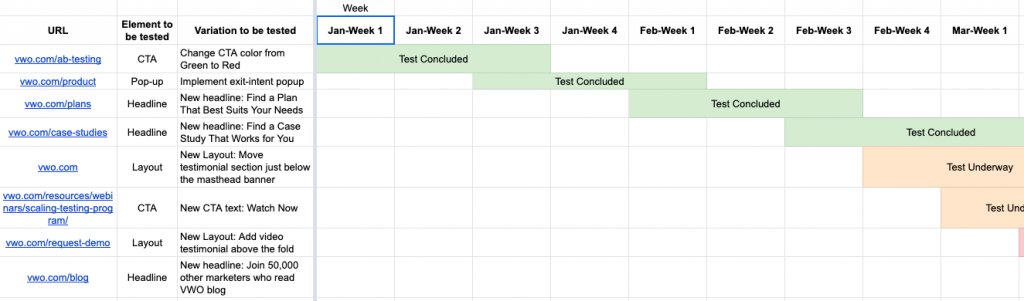

While doing your preliminary research, it is essential to define the stages of each experiment, such as creating the test, QA, launch, and waiting for results. A CRO roadmap lets you track the timeline and progress of every experiment at each stage, which makes reliable results more likely.

We therefore recommend creating a roadmap that covers at least a quarter.

Example of a testing roadmap with the calendar

Best practice

A testing calendar for your roadmap helps. Keep the following in mind while building it:

- Higher traffic means quicker results: Most A/B testing tools declare a result only once the confidence level reaches a set threshold, commonly 95%. A test needs to collect a certain sample size and number of conversions to reach that level. On low-traffic pages this takes longer, so plan for these tests to run for a while before they reach statistical significance.

- Seasonal testing: Accounting for seasonality helps you sample high-quality traffic. Here is what that means for different industries:

  - eCommerce: A/B testing during holiday seasons, such as Black Friday, brings more traffic to the website and gives you quicker results. However, a variation that drops sales during the peak season is a nightmare for most marketers. Customer experience optimization platforms such as VWO offer Multi-Armed Bandit testing, which uses machine learning to dynamically shift visitor allocation toward better-performing variations while the test is running.

  - SaaS: A/B testing during low sales cycles, such as the Christmas and New Year holidays, brings slower results, assuming most of your customers and prospects are on vacation.
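To make the Multi-Armed Bandit idea more concrete, here is a minimal sketch using Thompson sampling, one common bandit strategy. It is an illustration only and not VWO's implementation; the function name `thompson_allocate`, the conversion rates, and the visitor count are all assumptions made up for this example.

```python
import random


def thompson_allocate(true_rates, visitors, seed=0):
    """Simulate Thompson sampling over variations with yes/no conversions.

    true_rates holds hypothetical true conversion rates (unknown in practice).
    Returns how many visitors each variation ended up receiving.
    """
    rng = random.Random(seed)
    wins = [0] * len(true_rates)     # conversions observed per variation
    losses = [0] * len(true_rates)   # non-conversions observed per variation
    shown = [0] * len(true_rates)    # visitors allocated per variation

    for _ in range(visitors):
        # Draw a plausible conversion rate for each variation from its
        # Beta(1 + wins, 1 + losses) posterior and show the highest draw.
        draws = [rng.betavariate(1 + w, 1 + l) for w, l in zip(wins, losses)]
        arm = draws.index(max(draws))
        shown[arm] += 1
        # Simulate whether this visitor converts.
        if rng.random() < true_rates[arm]:
            wins[arm] += 1
        else:
            losses[arm] += 1
    return shown


# Assumed rates: control converts at 5%, variation at 10%.
allocation = thompson_allocate([0.05, 0.10], visitors=5000)
print(allocation)
```

As evidence accumulates, most visitors are routed to the variation that appears to convert better, which limits the sales lost to a weak variation during a peak season.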

2. Unorganized one-off testing

A/B testing is only one part of CRO. However, we’ve noticed many marketers jumping straight into testing without analyzing their site’s current performance or backing their tests with research-based hypotheses.

According to industry experts, only 1 out of 7 A/B tests (about 14%) delivers a statistically significant result. The same statistics show that tests run by agencies have a better success rate of 33.3%.

There are several reasons why our partner agencies and companies with organized CRO processes perform better. Some of them are:

- They design a testing roadmap so that testing is done methodically and as per a prioritization framework.

- They create benchmarks for KPIs from current website performance using Goals and Funnels.

- They analyze the reason behind drop-offs by using tools available in VWO such as Heatmaps, Recordings, Surveys, etc.

- They create data-backed testing hypotheses.

- They run test campaigns based on these hypotheses and hence increase the chances of success.

3. Not having a clear understanding of KPIs in your CRO project

Clarity is the first thing you should seek before starting any experiment or campaign. Without a clear objective, KPIs, benchmarks, milestones, and a roadmap, you cannot evaluate results analytically, and the whole campaign suffers.

Here are some of the KPIs that our successful customers have in place:

- Current Revenue and Projected Uplift

- Average Order Value (AOV)

- Customer Loyalty/Retention

- Lifetime Value (LTV)

- Return on Investment (ROI)

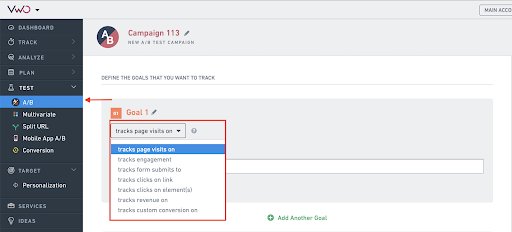

4. Not tracking micro conversions

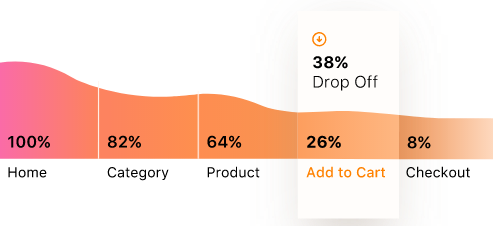

As a CRO practitioner, your business KPIs may include macro conversions such as revenue, free trial signups, demo or quote requests, purchases, bookings, and checkouts. But do you keep track of micro conversions, the baby steps toward the final goal?

Let’s look at what they are.

Micro conversions include form fills, element visibility, scroll depth, and clicks on CTAs. They play a pivotal role in determining your website performance and user experience, and they let you analyze customer behavior throughout the journey on your website.

Let’s take the example of an eCommerce website. You can track the following micro conversions while setting up your goals and funnels:

- Adding products to cart: Cart abandonment is one of the major concerns for the eCommerce industry. Although it hurts your conversion goals, it signals visitors’ interest in your products and can be used for retargeting. By tracking clicks on ‘Add to Cart’, you can isolate shoppers who add products to their carts and then abandon them, and observe their behavior to reduce your cart abandonment rate.

- Viewing your product pages: We tend to focus almost entirely on conversions and goals, and end up ignoring important metrics like product page views. Tracking product page views gives you an estimate of the percentage of visitors who are interested in exploring your product, which signals their intent to become customers. These insights can guide you in generating and testing new ideas to optimize the page for visitors who landed on it but did not convert.

- Browsing high volume of pages: You may have site visitors who visit many pages on your website without converting. This shows that they are somewhat interested in your business, but are unable to find what they are looking for. This presents an opportunity to explore testing ideas focused on motivating these leads to become customers.

- Creating an account: The number of visitors who create an account on your website can be another micro conversion to be tracked. Although creating an account does not indicate a macro conversion (such as a purchase), this data point presents a group with high intent to become customers.
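As a rough illustration of how these micro conversions fit together, the sketch below computes step-to-step conversion rates for an ordered funnel. The step names and visitor counts are hypothetical, and `funnel_report` is a helper invented for this example rather than part of any tool.

```python
def funnel_report(step_counts):
    """Return (step, visitors, rate_from_previous_step) for an ordered funnel."""
    report = []
    previous = None
    for step, count in step_counts:
        if previous is None:
            rate = None            # first step has nothing to compare against
        elif previous == 0:
            rate = 0.0             # avoid dividing by zero on an empty step
        else:
            rate = count / previous
        report.append((step, count, rate))
        previous = count
    return report


# Hypothetical visitor counts, for illustration only.
steps = [
    ("product_page_view", 10000),
    ("add_to_cart", 1500),
    ("account_created", 600),
    ("purchase", 300),
]

for step, count, rate in funnel_report(steps):
    label = "-" if rate is None else f"{rate:.1%}"
    print(f"{step:20s} {count:6d} {label}")
```

The step with the lowest rate from the previous step is usually a sensible place to start looking for test ideas.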

5. Testing with an inadequate sample size

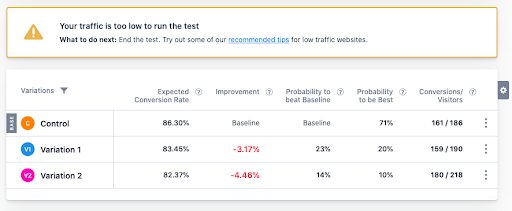

Businesses starting out with CRO programs often make the mistake of running A/B tests on low-traffic pages. Such tests do not produce reliable results because they do not collect enough data to reach a high confidence level.

You will then either have to stop the test or wait until it reaches statistical significance, which can take a very long time with low traffic.

On your VWO dashboard, you will be able to see a warning if you are running your campaigns on low traffic.
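To get a feel for why low traffic stretches a test out, here is a back-of-the-envelope sketch based on the standard two-proportion sample size approximation. It is a frequentist estimate under assumed inputs (5% baseline, 20% relative uplift, 95% confidence, 80% power) and is not how VWO or any particular tool computes test duration; the function names are made up for this example.

```python
import math
from statistics import NormalDist


def sample_size_per_variation(baseline_rate, relative_uplift,
                              alpha=0.05, power=0.80):
    """Approximate visitors needed per variation to detect the given uplift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_uplift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)   # two-sided significance
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def days_to_finish(n_per_variation, variations, daily_visitors):
    """Days needed if all daily visitors are split across the variations."""
    return math.ceil(n_per_variation * variations / daily_visitors)


n = sample_size_per_variation(baseline_rate=0.05, relative_uplift=0.20)
print("visitors per variation:", n)
print("days at 500 visitors/day:", days_to_finish(n, 2, 500))
print("days at 5000 visitors/day:", days_to_finish(n, 2, 5000))
```

Under these assumptions each variation needs a little over 8,000 visitors, so a page with a few hundred daily visitors needs weeks where a high-traffic page needs days.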

Best practice

- Run tests on high-traffic pages, such as pricing and free shipping pages.

- Let your tests run until they achieve a statistically significant result.

6. Pausing tests without waiting for results

Even when a test has a substantial sample size, marketers sometimes pause it before it reaches statistical significance. There are a couple of common reasons:

- They want to preserve the A/B testing tool’s visitor quota, so they avoid running tests on high-traffic pages or cap the visitor quota for a running test, without realizing that this leads to invalid or delayed outcomes.

- The testing tool suggests a longer duration to declare a result than the CRO team expects, so the team concludes the test and pauses the campaign before it reaches statistical significance.

What happens when you act on such inconclusive results?

If you implement changes based on an A/B test with inconclusive results, website performance can start to decline. Any savings on testing quota are then offset by the indirect cost of that decline. Treat the short-term euphoria of early positive results with caution.

Best practice

Keep the test running until it reaches statistical significance so that you can declare the result with confidence. This gives you more reliable results and, eventually, better website performance.

If you are still concerned about the visitor quota consumed, tools such as VWO have capabilities that use less traffic to declare a result. For example, in VWO:

- You can sample only a percentage of your traffic while running campaigns on high-traffic pages.

- You can run more tests with less visitor quota consumption. Even if a user becomes part of 3-4 active test campaigns on your website, they still count as one visitor against that month’s quota.

- The advanced Bayesian statistics engine is designed to take less time and quota to give you accurate results.

7. Misreading result reports

Reading and understanding test reports can be challenging for people new to CRO. An ideal test report has at least three goals, all of which must be closely monitored. People often get excited when they see an uptick in one goal and ignore the other goals and metrics that affect the experiment as a whole. This may lead them to stop the test before it reaches statistical significance or to make decisions based on inconclusive results.

Best practice

Walking through the reports for a few tests with the platform’s team or representative can be insightful.

Here are some questions you might ask to understand how your platform works:

- How is statistical significance calculated?

- What is a winner in the report?

- What is a smart decision in the report?

- How do I interpret the report?
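For the first question, it can help to know what a basic significance calculation looks like. The sketch below uses a classical two-proportion z-test on made-up numbers. Platforms differ in their methods (VWO, for instance, describes its engine as Bayesian), so treat this as one textbook approach rather than what your tool reports; `two_proportion_z_test` is a name chosen for this example.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (relative_uplift, two_sided_p_value) for variation B vs control A.

    Assumes the control has at least one conversion and both groups have visitors.
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that A and B convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return rate_b / rate_a - 1, p_value


# Hypothetical counts: 500 of 10,000 control visitors convert, 570 of 10,000 in the variation.
uplift, p_value = two_proportion_z_test(500, 10000, 570, 10000)
print(f"relative uplift: {uplift:.1%}, p-value: {p_value:.3f}")
print("significant at 95% confidence:", p_value < 0.05)
```

A check like this applies to one goal at a time, which is why an uptick in a single goal is not enough to call a winner when the report tracks several.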

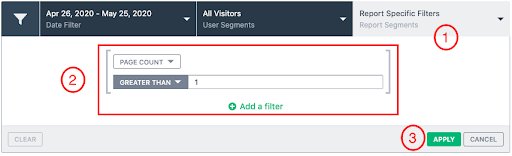

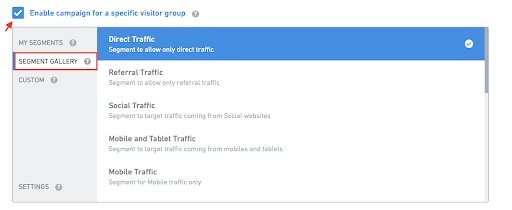

8. Viewing all traffic with the same lens

In fast-paced testing environments, marketers often overlook the details of the traffic in reports, such as segmentation, and make decisions hastily. They take a cursory look at the overall result and decide to roll the changes out on the live website. Ignoring these details is a common cause of failed or inconclusive tests.

Instead of jumping directly to the overall result, compare the results of different segments for deeper insights. Segmentation can reveal trends specific to a group of visitors whose behavior differs drastically from that of other groups. To get granular, more actionable insights, segment your reports by demographics, geography, and other dimensions. Once it becomes a habit, this can contribute significantly to the success of your CRO program.

For example, some segments that marketers in the eCommerce space use are:

- Demographic segmentation: Gender, age, socio-economic status, birthdays, and location

- Behavioral segmentation: RFM (Recency, Frequency, Monetary), new customers, returning customers, and cart abandonment

- Source and Devices: Source URL and device
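To show why the overall number can hide what is happening in a segment, here is a small sketch that breaks conversion rates down by segment and variation. The visit data is fabricated purely for illustration, and `conversion_by_segment` and `make_visits` are hypothetical helpers, not functions of any testing tool.

```python
from collections import defaultdict


def conversion_by_segment(visits):
    """visits: iterable of (segment, variation, converted) tuples.

    Returns {(segment, variation): conversion_rate}.
    """
    totals = defaultdict(lambda: [0, 0])   # key -> [visitors, conversions]
    for segment, variation, converted in visits:
        totals[(segment, variation)][0] += 1
        totals[(segment, variation)][1] += int(converted)
    return {key: conv / n for key, (n, conv) in totals.items()}


def make_visits(segment, variation, visitors, conversions):
    """Build illustrative visit records with a fixed number of conversions."""
    return [(segment, variation, i < conversions) for i in range(visitors)]


# Made-up data: the variation helps on mobile but not on desktop.
visits = (
    make_visits("mobile", "control", 1000, 40)
    + make_visits("mobile", "variation", 1000, 65)
    + make_visits("desktop", "control", 1000, 80)
    + make_visits("desktop", "variation", 1000, 78)
)

rates = conversion_by_segment(visits)
for (segment, variation), rate in sorted(rates.items()):
    print(f"{segment:8s} {variation:10s} {rate:.1%}")
```

In this invented data the variation lifts mobile conversions while desktop stays flat, a pattern the blended result would blur. Each segment still needs enough visitors of its own before its result can be trusted.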

How easy is it to do this with VWO?

VWO offers many segments in reporting, and you can also create your own segments (Custom Dimensions) based on your website’s requirements.

9. Ignoring well-performing tests

‘Why fix something that isn’t broken?’

CRO is an exercise that aims at continuously improving your website, to keep pace with global standards.

A well-performing page does not mean there is no scope for further improvement. There may still be untapped conversions to capture.

The “Historical Optimization” project by Pamela Vaughan of HubSpot is an inspiring example. The project involved updating and republishing old blog posts to generate more leads.

During her analysis, she found that 76% of blog views came from old posts, and 96% of leads came from these posts as well. By optimizing the old posts, she more than doubled the leads they generated. She also increased the monthly views of old posts by an average of 106%.

Best practice

Prioritize working on low-performing pages, but don’t ignore your high-performing pages altogether. You might find a few ideas if you pay attention to your website’s insights. You can also run on-page surveys to collect user feedback and understand how to further optimize the experience.

10. Giving up after a failed test

What is a failed test?

If the variation you created did not perform better than the original version, it is deemed a failed test. This conventional belief is entirely wrong! A “failed” test is a source of numerous insights and learnings, and is a part of CRO practice. So, persevere. There are no failed tests – only winners or learning opportunities.

Here are a few questions you should ask if the variation does not yield an uplift:

- Was the hypothesis wrong? What was the expected result, and why was it unattainable?

- Why did the original version perform better?

- What did the test results teach you about your visitors?

- Is there any segment where an uplift was observed?

Conclusion

Failure in CRO does not mean you should quit. There is learning at every step. Take a systematic approach in every experiment you run and bear in mind the common mistakes shared in this post.

There is no straight path and no secret sauce for achieving the conversion rates you want. The ultimate value of CRO lies in a consistent, diligent effort to learn, evolve, and get better at your game every day. So, hang in there and keep exploring.