The following article draws on Harvard Business Review’s case study about Booking.com’s hallowed experimentation culture.

1,000 concurrent experiments. Tests that can be deployed across 75 countries and 43 languages in under an hour.

A/B testing that spans 1.2 million hotels, homestays, and inns. If ever there was an organization with conversion rate optimization (CRO) running through its cultural veins, it must be Booking.com.


Since we pioneered Conversion Rate Optimization as a category, most of our customers, in e-commerce and beyond, naturally ask pointed questions about instilling a CRO-centric culture in their organizations. More specifically, most of the questions we encounter are about:

- Culture – “How can I reinvent our culture to worship CRO?”

- Process – “Is there a template we can follow? Are there any CRO best practices that should be followed?”

- Motivation – “With 9 out of 10 tests failing, how can we stabilize the morale of our marketing team?”

This article answers some of the questions by way of stories gleaned from the study and hopefully inspires your online store to embrace eCommerce CRO.

***

Booking.com is proof that CRO can be more than a growth lever: it can be the fulcrum on which the whole business turns. Below, we summarize key insights from HBR’s comprehensive case study about what makes Booking.com an apex predator in the experimentation food chain.

Binding the threads together: decentralization, heuristics on failed tests, the flywheel effect, and a steadfast reliance on data.

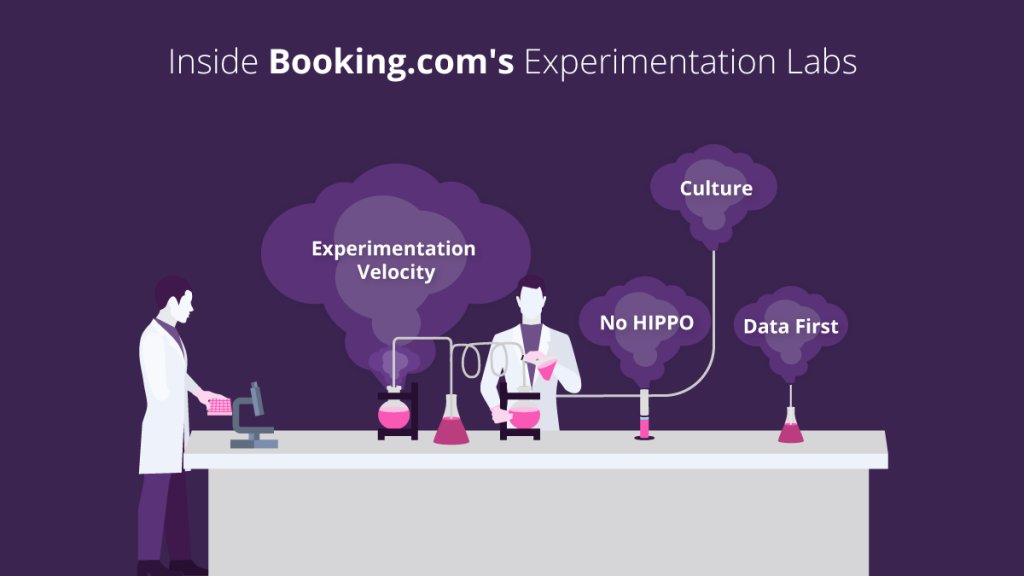

Decentralization

In Booking’s embryonic stage, one key tenet paved the way for much of its current experimentation culture: every employee had the freedom to run an experiment without getting stuck in decision-making bureaucracy. They did not have to draft a meeting agenda, defend the hypotheses behind the test, or answer uncomfortable HIPPO (highest-paid person’s opinion) questions about why a particular test should run. This decentralized outlook toward CRO had several direct and indirect benefits.

- Volume: The collective volume of experiments rose sharply. At the time the case study was written, Booking reported running about 1,000 concurrent experiments.

- Power of compounding: Much like a stock market portfolio, Booking reaped outsized gains from the compounding effect of continuously investing in an “infinite testing loop.” Assuming an industry-standard 10% success rate and an average 1% revenue uplift per winning test, the table below gives a simple additive estimate of total revenue uplift at various concurrency levels.

| Number of concurrent tests | Success rate | Revenue uplift per test | Number of successful tests | Total revenue uplift (uplift per test × successful tests) |
| --- | --- | --- | --- | --- |
| 100 | 10% | 1% | 10 | 10% |
| 500 | 10% | 1% | 50 | 50% |
| 1000 | 10% | 1% | 100 | 100% |
| 1500 | 10% | 1% | 150 | 150% |
| 2000 | 10% | 1% | 200 | 200% |

- HIPPO takes a backseat: Some leaders tend to believe that they understand customers better than employees further down the hierarchy do. Booking humbled many a leader by ensuring that no change they suggested was published without an A/B test proclaiming victory. In fact, its first American CEO experienced Booking’s testing culture first-hand when a logo he proposed could not enter production without a test validating it.

“When Booking’s previous CEO first arrived from the US, he presented a redesigned logo to the staff. People said “that’s great; we’ll check it with an experiment.” He was baffled but had no choice. The experiment would determine if the logo could stay.”

Excerpt from the study
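The arithmetic behind the uplift table in this section can be sketched in a few lines of Python. The table simply adds up the 1% gains from winning tests; if each win instead multiplies revenue on top of the previous ones, which is what “compounding” implies, the totals grow faster. The function names below are our own, and the sketch assumes that every winning test delivers its full uplift independently of the others, which is optimistic for tests that overlap in practice. Treat it as an illustration of the arithmetic, not a forecast.

```python
def successful_tests(concurrent_tests: int, success_rate: float = 0.10) -> int:
    """Number of winning tests, using the article's assumed 10% success rate."""
    return round(concurrent_tests * success_rate)


def additive_uplift(concurrent_tests: int, success_rate: float = 0.10,
                    uplift_per_win: float = 0.01) -> float:
    """Total uplift when each win's gain is simply summed (as in the table)."""
    return successful_tests(concurrent_tests, success_rate) * uplift_per_win


def compounded_uplift(concurrent_tests: int, success_rate: float = 0.10,
                      uplift_per_win: float = 0.01) -> float:
    """Total uplift when each win multiplies revenue by (1 + uplift_per_win).

    Assumption: wins stack multiplicatively and do not interact.
    """
    wins = successful_tests(concurrent_tests, success_rate)
    return (1 + uplift_per_win) ** wins - 1


if __name__ == "__main__":
    # Same concurrency levels as the table.
    for n in (100, 500, 1000, 1500, 2000):
        print(f"{n:>5} tests: additive {additive_uplift(n):.0%}, "
              f"compounded {compounded_uplift(n):.0%}")
```

At 1,000 concurrent tests, for instance, the additive estimate is 100%, while stacking 100 wins of 1% each multiplicatively gives roughly 170%.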

Investigating Failures: Gold at the Far End of the Rainbow

In a typical CRO environment, failed tests are archived and never looked at again. Booking views failures through a different lens: using a combination of heuristics and qualitative data, the experimentation team analyzes failed tests to unearth more behavioral signals.

For example, in one of their many failed experiments, the team tried to measure the impact on conversions of showcasing “WiFi Signal Strength” for all properties. The hypothesis seemed sound: guests, especially business travelers, treat Internet speed as one of their primary booking criteria. The test displayed a banner reading “WiFi Strength – Strong” on the listing. Much to the team’s surprise, it failed to deliver a conversion uplift.

However, the experimentation team did not stop there. Interviews with consumers in their Research Lab surfaced another fascinating insight: guests wanted to know whether the hotel’s WiFi would let them watch Netflix or send emails without interruption. In product parlance, the team approached the problem from the Jobs To Be Done standpoint. The Internet itself wasn’t important; the jobs done through the Internet were. Almost immediately, the team ran another test, this time with labels like “Fast Netflix Streaming.” The new test put the team back on its winning ways by beating the control.

“For example, we were sure people cared about the quality of WiFi in their hotel rooms. We tested a feature that displayed WiFi speed on a 1–100 scale, and customers did not care. It was only when we showed whether the signal was strong enough to do email or watch Netflix, that customers responded favorably.”

A/B Testing: The Flywheel Effect

On a spectrum from strategic to tactical, a minority of companies place CRO in the middle, while the bulk of the distribution leans toward the tactical end. We have documented myriad reasons why customers purchase a CRO platform for the first time:

- “We have a website redesign underway. We want to evaluate if visitors can navigate (the new design)” – Midway

- “We spent $100,000 on new ad campaigns and visitors aren’t converting!” – Tactical (and reactive!)

- “Our new Chief Product Officer has suggested new versions of in-app nudges. We are not too sure (of them working)” – Tactical (and vengeful!)

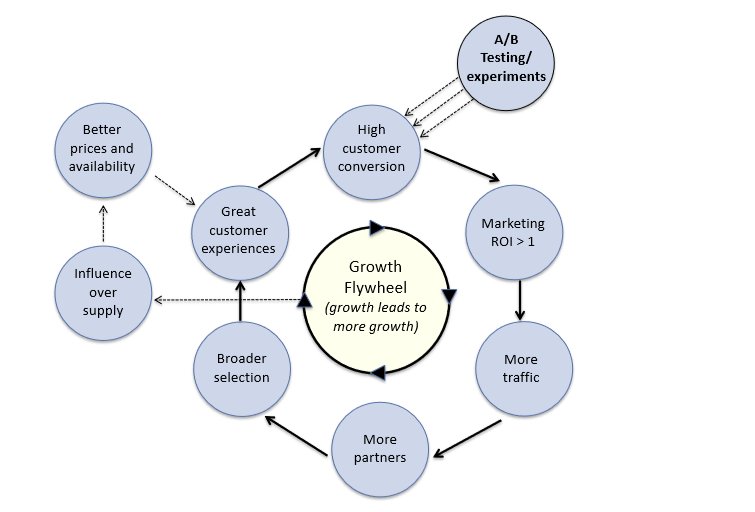

Not Booking; it rewrote the playbook. From the day of Booking’s inception, A/B testing has been treated as the engine that sets a revenue flywheel in motion, as outlined below.

Ensure Great Customer Experiences → A/B Testing → Improve Product Experience → Increase Conversions → More Word of Mouth → Better ROI on Marketing Campaigns Through Experiments → More Sellers Willing to Be a Part of the Platform → More Inventory at Better Rates → Great Customer Experience


In God We Trust, for International Expansion, Bring Data

Most international expansions follow a default script. Once an organization decides to expand abroad, it generally picks the target country’s financial (or political) capital as its local headquarters. Quite naturally, when Booking.com announced its arrival in Germany, most analysts and industry watchers expected to meet them in Berlin.

They were in for a disappointment. Mining troves of customer search data, the team quickly came to an unexpected conclusion: Dutch nationals (Booking originated in Holland) were making a beeline for a little-known ski village named Winterberg. The revelation came as a surprise, and senior executives who had started hunting for lodging in Berlin had to change plans overnight. Booking.com chose Winterberg as the face of its German operations.

“We operated only in Holland when I started. Our country is so small but Dutch people travel abroad quite a lot. To follow demand, we built an international platform, while our competitors in larger countries focused on their home markets. Conventional wisdom suggested to start in Berlin where you expect most Dutch tourists. But we decided to check which city comes up first in customer searches. It turned out to be a village called Winterberg, a ski paradise for the Dutch. So we followed the data and open our first office there”

***

Frameworks, mental models, templates: in our yearning for a standardized CRO toolkit soaked in proven success, we tend to forget that templates are akin to DNA; no two can be the same. Booking.com’s experimentation-centric way of life may stem from many factors (culture, founder ideologies, core team principles, and/or sheer bootstrapping-induced “hustle”), so merely replicating the Booking school of thought might cause unhealthy disruption.

Instead, organizations should strive to document their own A/B testing-induced flywheel as a starting point for future CRO programs. Booking understood that A/B testing goes beyond conversions; CRO had the potential to shift the travel ecosystem. It was a win-win: customers and sellers won together.

What would your A/B testing flywheel look like?