The following is an interview with Michal Parizek, Senior eCommerce & Optimization Specialist at Avast (a leading antivirus software company). Michal is a Conversion Rate Optimization (CRO) expert with over seven years of experience across multiple industries.

Michal Parizek, Senior eCommerce & Optimization Specialist at Avast

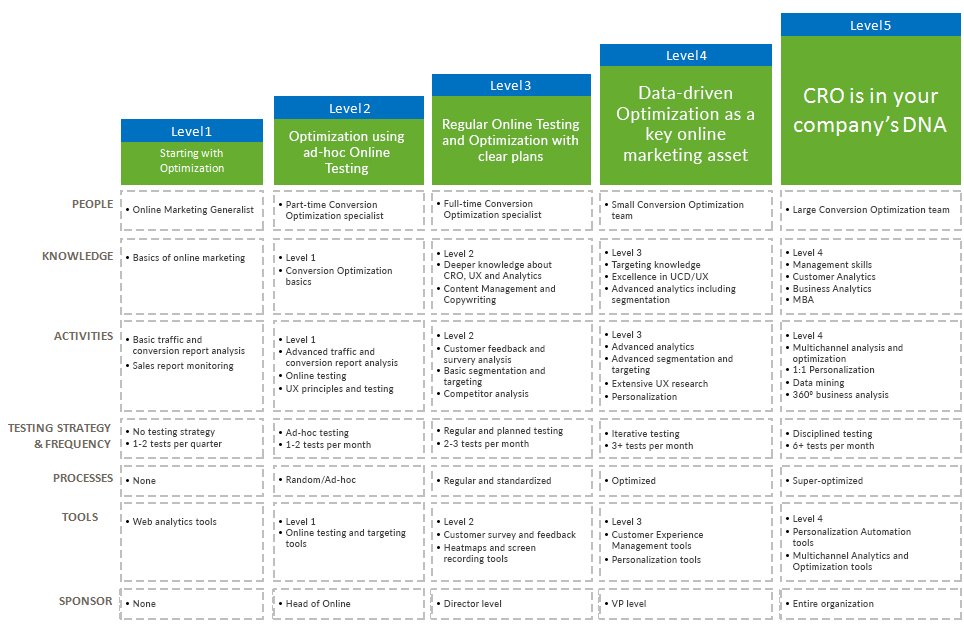

Michal has created the popular Conversion Rate Optimization Maturity Model, where he illustrates the core assets of a successful CRO program for organizations operating at different scales. Using the model, organizations can understand the current state of their CRO efforts and identify ways to improve their programs.

This is what the CRO maturity model looks like:

The questions in this interview aim to bring out actionable tips for organizations trying to bring structure to their CRO program.

Let’s begin.

Regarding the People and Culture Required for CRO

1) What are the essential skills or capabilities needed in a CRO team?

CRO is a complex discipline. In my opinion, it is a combination of a few, quite different skills.

- One of them is analytics. To have a successful CRO program, you need to understand your data and derive insights.

- Another skill is user experience design. Being able to prototype a great user experience is important.

- Then marketing and copywriting—working on pricing strategies, composing an effective value proposition, and other relevant activities.

- Other useful skills include statistics, consumer psychology, email marketing, and so on.

CRO can benefit from a range of skill areas. Therefore, it is very difficult to find great CRO consultants.

When you are building a CRO team, make sure you hire at least an analyst, a UX designer, and a marketer/copywriter. I see these three as key to driving an effective CRO program and results.

2) What does Avast do to create and spread a culture of CRO within the organization?

CRO has a good position in Avast culture. We are keen on A/B testing every major change in our sales flows or website. Data-driven decisions outweigh the gut-driven ones, even though there is room for improvement. When I think about the reasons, I think there are mainly three things:

- No data silos: Everyone has access to pretty much all the data in the company.

- Sharing knowledge: We have been practicing A/B testing in the company for over four years, and the practice is now deeply rooted in our eCommerce department. Senior employees share their knowledge with the newcomers and help to spread the CRO culture.

- Effectiveness: Particularly when Avast was much smaller than today, we counted literally every penny we spent. (Was the initiative worth the cost? How much did the $1,000 investment in that partnership return to us?) Being small is an advantage since you need to monitor your spending and earnings closely, and it naturally forces you to be more data-driven.

Regarding the Importance of a Sponsor

3) How does the absence of a sponsor affect an organization’s CRO program?

It makes things more difficult. It is not just about the budget, but also about time and resources. You are fighting two enemies at the same time: a low conversion rate and your boss. It is not easy to practice CRO in such circumstances. In the end, to be successful, you need to get buy-in from the sponsor, whether that is your boss or the management.

4) How can you convince the top management to back your CRO program?

From my experience, the strongest argument for a CRO program has always been the “results.”

Speak in terms of dollars. Prove how much money your organization gained because of the CRO efforts. And explain how much money your company can gain in the future if you get more resources, time, or money. Don’t forget that tests with negative results are equally powerful, since you can show how much money you would have lost had you not practiced A/B testing.

Regarding the Research Methodology in CRO

5) What is the importance of pre-test analysis or research?

It is the absolute key. If you just throw ideas at your A/B testing tool, your success rate will suck and you will waste time and resources. Arriving at hypotheses scientifically is essential. (In my previous job at Liberty Global, we did not pay a lot of attention to research and we were not very successful in our CRO activities.)

Also, the pre-test analysis can help you assess test feasibility: whether you can get results in a reasonable time, and how many variants you can afford to have.
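As a rough illustration of such a feasibility check, here is a minimal sketch that estimates how many visitors each variant needs and how long the test would run. It assumes a standard two-sided two-proportion z-test approximation, which is not something the interview specifies; the function names and all input numbers are hypothetical.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.8):
    """Approximate visitors needed per variant (two-sided two-proportion z-test)."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)  # conversion rate we hope to detect
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def duration_in_days(n_per_variant, variants, daily_visitors):
    """Days needed when traffic is split evenly across all variants, control included."""
    return math.ceil(n_per_variant * variants / daily_visitors)


# Assumed inputs: 3% baseline conversion, 10% relative lift, 4,000 visitors per day.
n = sample_size_per_variant(baseline_rate=0.03, relative_lift=0.10)
for variants in (2, 3, 4):
    print(f"{variants} variants: about {duration_in_days(n, variants, 4000)} days")
```

Each extra variant stretches the run time proportionally, which is one way to decide how many variants a page's traffic can support.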

6) What are the essential tools required for the research?

You can divide them into quantitative and qualitative:

- The quantitative tools include analytics, reports, heatmaps, and session recordings. They help you identify where the problem is.

- On the other hand, the qualitative tools help you find out why people take certain actions on a website. These include usability testing, card sorting, surveys, interviews, focus groups, and so on.

7) What are some of the basic mistakes people often make with pre-test analysis?

The biggest (and a common) mistake is the absence of a pre-test analysis altogether. Then there are, I believe, the other common analysis mistakes: misinterpreting metrics, ignoring sampling issues, lacking common sense, skipping the test feasibility analysis, and more.

Every test specification should contain a research part that explains why a particular test should be executed and what insights led you to the test idea.

From my experience, tests backed by solid research have a higher success rate than tests without any research.

Regarding A/B Testing Practices

8) How important is it to keep a long-term calendar for testing experiments?

A test calendar helps you make sure that important tests are launched on time. It is also vital for resource planning and for keeping all stakeholders in the loop. We usually do a quarterly overview of what tests we’d like to run and then we specify and add details on a monthly basis.

9) How do you prioritize tests?

I often use a rather simple formula. First, I list all the possible tests we could run in a certain timeframe. Then, to every test I add two estimates:

- Effort: How many hours/days are required to execute the test? (It’s even better if you can translate that into monetary values.)

- Impact: How much money will be returned if the test is successful? Rather than thinking about whether the test can increase the conversion rate by 10% or 15%, pay attention to where the test is running and what element you are changing. Do a pre-test analysis and calculate how many conversions a particular page generates. By using research or common sense, identify the importance of the changes. For example, in most cases, changing prices will have a bigger impact than changing button colors on a homepage. After you have executed several A/B tests, you will get an idea of what matters and what does not. And this will help you set better expectations.

When all your tests are listed with effort and impact estimates, congratulate yourself. Now, it is easy. You execute the tests with the highest impact and least effort first. Then in the long term, you focus on the tests with a high impact, but also with great effort. In the meantime, you can execute the tests that don’t have a huge impact, but are easy to launch. And, you avoid ideas that require a lot of effort, but have almost no impact.
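The effort and impact buckets described above can be sketched in a few lines. This is only an illustration: the cutoff values, the bucket labels, and the example ideas are all assumptions, not Avast's actual figures.

```python
from dataclasses import dataclass


@dataclass
class Idea:
    name: str
    effort_days: float  # estimated effort to build and launch the test
    impact_usd: float   # estimated return if the test is successful


def bucket(idea, effort_cutoff=15, impact_cutoff=10_000):
    """Place an idea in one of four buckets; both cutoffs are arbitrary assumptions."""
    high_impact = idea.impact_usd >= impact_cutoff
    low_effort = idea.effort_days <= effort_cutoff
    if high_impact and low_effort:
        return "run first"
    if high_impact:
        return "plan for the long term"
    if low_effort:
        return "run in the meantime"
    return "avoid"


def prioritize(ideas):
    """Group ideas by bucket, highest estimated impact first within each bucket."""
    groups = {}
    for idea in sorted(ideas, key=lambda i: i.impact_usd, reverse=True):
        groups.setdefault(bucket(idea), []).append(idea.name)
    return groups


# Hypothetical backlog.
backlog = [
    Idea("New price points", effort_days=10, impact_usd=50_000),
    Idea("Checkout redesign", effort_days=40, impact_usd=80_000),
    Idea("Homepage button color", effort_days=1, impact_usd=500),
    Idea("Animated product tour", effort_days=30, impact_usd=1_000),
]
for label, names in prioritize(backlog).items():
    print(f"{label}: {', '.join(names)}")
```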

10) Can failed/inconclusive tests still provide value?

Yes, they can! If the test is designed and executed correctly (variants differ in one element, flawless measurement, sufficient data, no bugs in any variant, and so on), it provides great value regardless of the results. As long as you can learn from a test, it is a good test. Failed tests help you see which way you should not go. Inconclusive tests (again, if the tests are done correctly) tell you that perhaps the tested element does not matter much and you should test something else.

I really like what Ron Kohavi says,

“A valuable test is when the real results differ from your expectations. You learn the most in these cases and the learning matters the most in the long term.”

11) What are the common post-test analysis mistakes?

One of the common CRO mistakes is not checking whether the test results are trustworthy and statistically significant. We sometimes tend not to analyze these thoroughly. The XX% lift always sounds appealing and we want to believe it (particularly when we have also designed the test, which is why it is wise to always have somebody else take a second look). But do we have enough data? Are the results consistent over time? Is the traffic split balanced? Is the conversion lift driven by the change in design or just by chance? If we skip these checks, we can easily implement changes that may not have any effect or, even worse, may decrease our performance.

The other common issue is not monitoring post-test results in the long term. Do we see the XX% lift after the winning variation has been implemented? Once we have a successful test, we tend to switch our attention to another issue and another test and we forget to monitor the previous effect.
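Two of the trustworthiness questions raised in this answer, whether the traffic split is balanced and whether the lift could be due to chance, can be checked with basic statistics. The sketch below assumes a two-variant test, a chi-square check for the split, and a pooled two-proportion z-test for the lift. These are common choices rather than methods named in the interview, and the function names and visitor counts are made up.

```python
import math
from statistics import NormalDist


def split_p_value(visitors_a, visitors_b, expected_share_a=0.5):
    """P-value of a chi-square test that the traffic split matches the planned one."""
    total = visitors_a + visitors_b
    expected_a = total * expected_share_a
    expected_b = total - expected_a
    chi2 = ((visitors_a - expected_a) ** 2 / expected_a
            + (visitors_b - expected_b) ** 2 / expected_b)
    # With two groups the statistic has one degree of freedom, so sqrt(chi2) acts as |z|.
    return 2 * (1 - NormalDist().cdf(math.sqrt(chi2)))


def lift_p_value(conv_a, visitors_a, conv_b, visitors_b):
    """Two-sided p-value of a pooled two-proportion z-test on conversion rates."""
    rate_a = conv_a / visitors_a
    rate_b = conv_b / visitors_b
    pooled = (conv_a + conv_b) / (visitors_a + visitors_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    z = (rate_b - rate_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical results: control 300/10,000, variation 345/10,050.
print("split p-value:", round(split_p_value(10_000, 10_050), 3))
print("lift p-value:", round(lift_p_value(300, 10_000, 345, 10_050), 3))
```

With these made-up numbers the variation converts roughly 14% better in relative terms, yet the lift p-value stays above the conventional 0.05 threshold, which is exactly the kind of appealing result that deserves a second look before implementation.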

Regarding CRO Tools

12) What are the key attributes based on which a CRO tool is chosen?

Usually, costs and benefits are the main attributes we look at. You must look at both from a wider perspective. Costs are not only the money you pay for the tool; you also need to include implementation and maintenance costs (including any extra staff you need to hire to work with the tools).

Estimating the business impact of these tools is often challenging. Many CRO tools focus primarily on driving insights, and it is difficult to evaluate these in dollar terms. Fortunately, many tools offer free trial versions, so you can get an idea of how useful they can be for you.

13) When would you say an organization should invest in developing in-house CRO tools?

When a tool is key to your business, and the cost of a third-party tool outweighs the cost of developing (and maintaining) an in-house one.

Regarding Coordination Between Teams

14) Does the CRO team need to coordinate closely with any other team in an organization?

It does need to cooperate with several teams. From my perspective, the following two are key.

- First, the CRO team needs a close relationship with the business intelligence department to get correct data.

- Second, it needs a dedicated team of developers to execute the ideas that the CRO team creates.

- Then, there are many other valuable collaborations. A support team has always been a great source of customer feedback, so it is wise for a CRO team to stay in touch with them. The product itself is a conversion asset, so stay close to the product management team. How the product is marketed often defines the quality (and quantity) of leads and website visitors, so marketing is another team I recommend being close to.

Your Thoughts

What do you think about the essential assets of a successful CRO program? Do you have any more questions for Michal?

Write to us at marketing@vwo.com