Enterprise A/B testing platforms can cost thousands a month before you’ve validated a single hypothesis.

Open source tools change that equation.

They make it possible to start testing early, stay in control of your data and infrastructure, and build experimentation maturity without upfront platform costs.

This guide walks you through the best open source A/B testing tools available in 2026, what to look for when choosing one, and when your needs extend beyond them.

What are open source A/B testing tools?

Open source A/B testing tools are experimentation platforms where the underlying code is publicly available. Unlike proprietary SaaS tools, you can inspect how the system works, modify it to fit your needs, and self-host it on your own infrastructure.

This matters for several reasons. You’re not locked into a vendor’s pricing model. You control where your test data lives. And when a tool doesn’t work the way you need, you can change it.

Most open source testing tools are developer-oriented. They rely on SDKs, feature flags, or server-side integrations rather than visual drag-and-drop editors, making them highly flexible but requiring technical involvement to set up and maintain.

Why use open source tools for A/B testing?

1. Cost efficiency at scale

Open source tools come with no licensing fees or usage-based pricing, making them a strong fit for early-stage teams or organizations running experimentation programs at scale. Instead of having the number of tests capped by a pricing tier, teams can run experiments freely.

2. Full data control and privacy

With a self-hosting option, all experiment and user data stays within your own system. This is important for teams that must comply with strict requirements, such as GDPR or HIPAA, where sending data to third parties can be risky.

3. Flexibility across tech stacks

Open source tools are built to adapt. You can use your preferred programming languages, front-end libraries, and data pipelines to connect them across multiple platforms, custom architectures, or non-standard environments.

4. Built-in feature management capabilities

Many open source tools double as a feature management platform, supporting:

- Feature flagging

- Controlled rollouts

- Kill switches

This lets product teams handle both experimentation and release management in the same system.
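To make these capabilities concrete, here is a minimal Python sketch of how a flag evaluation might work under the hood: a stable hash gives each user a bucket, a percentage threshold controls the rollout, and an `enabled` field acts as the kill switch. The function names and the shape of the flag dictionary are illustrative assumptions, not the API of any tool covered in this guide.

```python
import hashlib


def bucket(user_id: str, key: str) -> float:
    """Map a user to a stable number in [0, 1) for a given flag or experiment key."""
    digest = hashlib.sha256(f"{key}:{user_id}".encode("utf-8")).hexdigest()
    # Interpret the first 8 hex characters (32 bits) as an integer, then normalize.
    return int(digest[:8], 16) / 0x100000000


def is_enabled(user_id: str, flag: dict) -> bool:
    """Evaluate a hypothetical flag: kill switch first, then percentage rollout."""
    if not flag["enabled"]:  # kill switch: feature is off for everyone
        return False
    return bucket(user_id, flag["key"]) < flag["rollout"]


def assign_variant(user_id: str, experiment_key: str,
                   variants: list[tuple[str, float]]) -> str:
    """Pick a variant from (name, weight) pairs whose weights sum to 1."""
    point = bucket(user_id, experiment_key)
    cumulative = 0.0
    for name, weight in variants:
        cumulative += weight
        if point < cumulative:
            return name
    return variants[-1][0]  # guard against floating-point rounding


if __name__ == "__main__":
    flag = {"key": "new-checkout", "enabled": True, "rollout": 0.2}
    print(is_enabled("user-42", flag))
    print(assign_variant("user-42", "checkout-test",
                         [("control", 0.5), ("treatment", 0.5)]))
```

Because the bucket depends only on the key and the user ID, the same user always sees the same variant, and raising `rollout` from 0.2 to 0.5 keeps the original 20% of users enabled while adding new ones.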

5. Community-driven development

Many open source tools are backed by active communities, so bug fixes, integrations, and new features often come from teams solving similar challenges, which keeps the tools evolving.

6. Warehouse-native and data-driven experimentation

Open source tools can integrate with data warehouse platforms such as Snowflake, BigQuery, and Redshift, allowing you to run experiments on existing data models without syncing data to an external analytics platform.
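As a rough illustration of the warehouse-native idea, the Python sketch below computes conversion per variant by joining an exposure table against an existing orders table. It uses an in-memory SQLite database as a stand-in for a warehouse, and the table names (`exposures`, `orders`) and columns are assumptions made for this example. A real analysis would also restrict conversions to events that happen after the user was exposed.

```python
import sqlite3

# Hypothetical schema: exposures(user_id, variant), orders(user_id, amount).
QUERY = """
SELECT e.variant,
       COUNT(DISTINCT e.user_id) AS users,
       COUNT(DISTINCT o.user_id) AS converters
FROM exposures AS e
LEFT JOIN orders AS o ON o.user_id = e.user_id
GROUP BY e.variant
ORDER BY e.variant
"""


def conversion_by_variant(conn: sqlite3.Connection) -> dict:
    """Return {variant: (users, converters, conversion_rate)}."""
    results = {}
    for variant, users, converters in conn.execute(QUERY):
        results[variant] = (users, converters, converters / users)
    return results


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE exposures (user_id TEXT, variant TEXT)")
    conn.execute("CREATE TABLE orders (user_id TEXT, amount REAL)")
    conn.executemany("INSERT INTO exposures VALUES (?, ?)",
                     [("u1", "control"), ("u2", "treatment")])
    conn.execute("INSERT INTO orders VALUES ('u2', 19.0)")
    print(conversion_by_variant(conn))
```

The point of the pattern is that the orders table is the one your team already trusts, so the experiment metric matches the numbers used elsewhere in the business.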

These advantages become especially relevant if:

- You have a strong engineering team to own the setup and iteration

- Data privacy and governance are non-negotiable

- Your tests go beyond the user interface to include backend logic or APIs

- You’re running a lean operation and need to optimize cost

- You want full control over your experimentation stack without vendor limitations

6 best open source A/B testing tools (2026)

1. GrowthBook

GrowthBook is an open source experimentation and feature management platform built for teams that already work with a data warehouse. It runs experiments directly on your existing metrics by connecting to systems like BigQuery, Snowflake, or Redshift, reducing the need for a separate analytics platform.

Available as a self-hosted or managed cloud solution, it also includes built-in Bayesian and frequentist statistical engines for experiment analysis using your own data models.

Features

A/B testing, Linked feature flags, Visual editor, URL redirects, Multi-arm bandit, Goal metrics, Granular targeting, Product analytics

Pricing

- The starter plan is free for startups for up to 3 GrowthBook users

- Paid plans start at $40/user/month

Pros & Cons

| Pros | Cons |
| --- | --- |
| Reliable traffic splitting with seamless data flow to your warehouse, plus flexible user targeting that’s easy to implement and well-supported when needed. | The interface can feel a bit minimal, which may make initial setup and navigation less intuitive, especially for teams without technical support. |

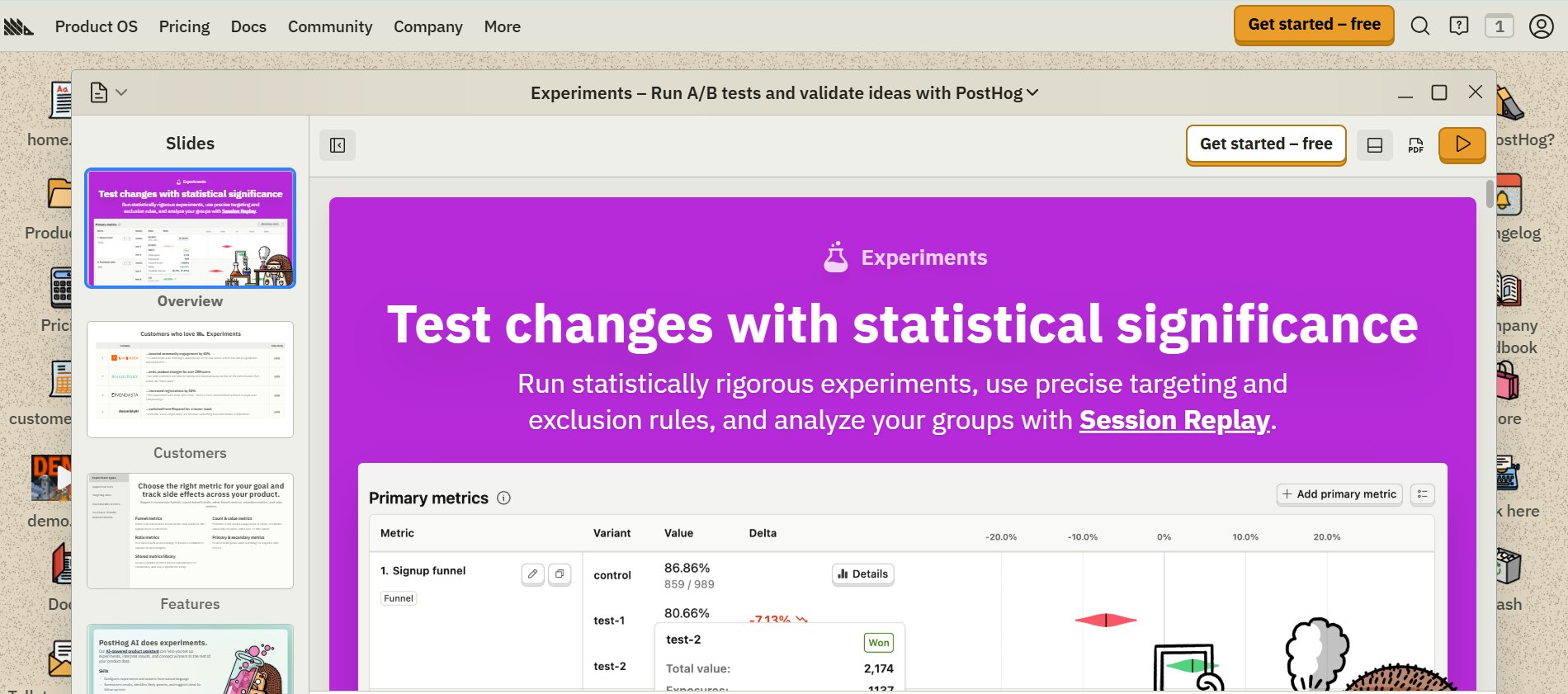

2. PostHog

PostHog is a developer-focused all-in-one analytics and experimentation platform that can be self-hosted or used via its cloud offering, depending on how much control you need.

Core features are available in the open source version, while some advanced capabilities are available only in its hosted plans (including a free tier).

Features

A/B testing, Session recordings, Heatmaps, Funnels, Feature flags, Customizable metrics, A/B/N testing, Redirect testing, Targeting, AI-powered product assistant, Bayesian & Frequentist engines, Integrated data warehouse

Pricing

- Free starter plan available, limited to 1 project

- Paid plans are usage-based once you exceed the free tier

Pros & Cons

| Pros | Cons |
| --- | --- |
| Flexible and reliable feature flagging, with support for targeting and conditional rollouts that work well in real-world use cases. | Requires some level of technical familiarity to navigate comfortably, which may make the initial experience feel a bit overwhelming for non-technical users. |

3. Unleash

Unleash is an open source feature management platform built primarily for feature flags and controlled rollouts, with experimentation layered in through custom metrics and integrations. It’s available as a self-hosted solution or via a managed cloud, giving teams flexibility based on their infrastructure and compliance needs.

Built with privacy and governance in mind, Unleash helps teams roll out features more safely, test changes with minimal code changes, and stay in control of how and where their data is managed.

Features

A/B/n testing, Custom targeting, User segmentation, Rollouts

Pricing

- 14-day free trial

- Self-service paid plans start from $75/seat/month

Pros & Cons

| Pros | Cons |
| --- | --- |
| Seamless data export to your warehouse, combined with robust, multi-language SDKs, significantly improves developer productivity and reliability. | The terminology around segments and constraints can take some getting used to, and features like SSO are limited to paid plans. |

4. Flagsmith

Flagsmith is an open source feature management and remote configuration platform that helps teams control releases across web, mobile, and server-side applications, with both self-hosted and cloud options.

It enables A/B and multivariate testing through feature flags: users are split into groups, and the resulting data is sent to your existing analytics stack for analysis, so teams can keep experimentation within their own data systems.

Features

A/B testing, Multivariate testing, Feature flags, Segmentation, Staged feature rollouts, User traits

Pricing

- Free plan for up to 50,000 requests per month.

- Paid plans start at $40/month and are billed annually.

- A 14-day free trial is available on the paid plan.

Pros & Cons

| Pros | Cons |
| --- | --- |
| Integration with the OpenFeature standard enhances testing flexibility and helps reduce the risk of vendor lock-in. | Requires developer effort to clean up feature-flag code after full rollout, adding maintenance overhead. |

5. Mojito

Mojito is a lightweight, open source split-testing setup for teams that want full control over experimentation. It’s fully source-controlled, so experiments are created, versioned, and deployed through Git and CI workflows your team already uses.

Its modular approach handles bucketing, tracking, and analysis separately, so you can plug in your own data and reporting tools, while its minimal, dependency-free design keeps performance overhead low.

Features

Lightweight front-end library (5.5 kb), Error tracking & handling, Self-hosted experiments, R Markdown reports, Segmentation tools

Pricing

- Pricing not publicly disclosed

Pros & Cons

- No widely documented pros and cons found

6. FeatBit

FeatBit is an open source feature flagging and experimentation platform that helps teams release features in stages, test changes directly in production, and roll back quickly without redeployment. With both self-hosted and cloud options, teams have flexibility in how they manage releases and experimentation.

Features

A/B testing, Advanced targeting & segmentation, Feature toggles, Real-time updates, REST API & SDKs

Pricing

- Online Infra

  - Free up to 1000 MAUs

  - Paid starts at $49/month

- Self Host

  - The foundation plan is forever free

  - Enterprise plan at $3,999/year

Pros & Cons

| Pros | Cons |
| --- | --- |
| Allows unlimited team members, projects, and environments in its free, open source version, making it easy to scale without additional cost. | The architecture can feel a bit complex early on; some teams may prefer a simpler setup, especially in the initial stages, with scope to streamline over time. |

Feature comparison of open source tools

| Tool | Primary Focus | Experimentation Depth | Feature Flagging | Analytics Capability | Data Ownership | Ease of Setup | Best Fit |
| --- | --- | --- | --- | --- | --- | --- | --- |
| GrowthBook | Warehouse-native testing | High | Yes | Warehouse-based | Full | Moderate | Data-driven teams |
| PostHog | All-in-one analytics stack | High | Yes | Built-in (strong) | High | Moderate | Product + growth teams |
| Unleash | Feature management | Moderate | Strong | Limited (via integrations) | Full | Moderate | Feature-rollout heavy teams |
| Flagsmith | Remote config + flags | Moderate | Strong | Limited (External tools) | Full | Moderate | Multi-platform release control |
| Mojito | Lightweight split testing | Basic-Moderate | Limited | Custom (DIY) | Full | Complex | Engineering-led teams |
| FeatBit | Feature flags + testing | Moderate | Strong | Basic-Moderate | Full | Moderate | Teams needing controlled rollouts |

How to choose the right open source A/B testing tool

Choosing an open source A/B testing tool isn’t about picking the “best” one; it’s about selecting the one that fits your data setup, experimentation workflow, and team structure. A good way to approach this is to evaluate your decision across a few key layers:

1. Start with your data infrastructure

If your team already has a data warehouse like BigQuery, Snowflake, or Redshift, tools like GrowthBook tend to fit in more naturally. You’re running experiments on top of the data definitions you already trust, instead of duplicating metrics elsewhere.

2. Match the tool to your team structure

PostHog works well for a mixed team of engineers, analysts, and product managers because it combines analytics and experimentation in one place. Smaller, engineering-led teams with fewer non-technical stakeholders can get surprisingly far with GrowthBook’s open source version, while tools like Mojito are well-suited for teams comfortable managing a more custom, code-driven setup.

3. Account for compliance requirements first

Tools like Unleash and FeatBit offer strong control over data handling, deployment, and access, which can support teams in regulated fields such as finance and healthcare. However, compliance ultimately depends on how the tool is implemented within your infrastructure.

4. Factor in long-term maintenance

Tools with active GitHub communities (GrowthBook, PostHog, Unleash) get regular updates, security patches, and contributions from the community. Others can work well, but may need more effort to sustain at scale.

5. Consider hidden costs

The tool itself might be free, but running it isn’t. Infrastructure and engineering time can add up quickly, sometimes to more than expected.

Common open source A/B testing challenges

Open source A/B testing tools offer control, but they also come with trade-offs that show up quickly as experimentation scales:

- Multi-tool dependency: Most teams end up putting together a testing tool, an analytics platform, and a reporting layer, which slows execution and makes scaling harder.

- Slower experimentation cycles: No visual editor means developers must make most changes, which can slow iteration, especially for web tests.

- DIY experiment analysis: Analysis usually runs on your own data stack. That means preparing reports, checking for statistical significance, and ensuring that all experiments are analyzed the same way.

- Engineering dependency: Most open source tools require developer involvement from setup through execution, making it difficult for non-technical teams, such as marketing or UX, to run experiments independently.

- Ongoing maintenance: From infrastructure to updates and integrations, ownership stays with your team. Over time, maintaining and scaling that setup requires ongoing effort.
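To give a sense of what DIY analysis involves, here is a minimal Python sketch of one common significance check, a two-sided two-proportion z-test on conversion counts. It is one standard frequentist approach, not the statistical engine of any tool in this guide, and the function name and example counts are made up for illustration.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int,
                          conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    standard_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / standard_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical counts: 200 of 2,000 control users vs 260 of 2,000 treatment users.
    z, p = two_proportion_z_test(200, 2000, 260, 2000)
    print(f"z = {z:.2f}, p = {p:.4f}")
```

Running every experiment through the same function, with the same significance threshold, is a simple way to keep analysis consistent across tests.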

Best practices for open source A/B testing

Define experiments before you run them

Start with a clear hypothesis, success metrics, and expected impact. Without this, even well-run tests won’t lead to meaningful decisions.

Keep your data consistent

Since analysis relies on your own data stack, standardize metrics, event tracking, and data models across experiments to avoid inconsistencies.

Size and run experiments correctly

Before you launch, figure out how large a sample you need and run tests long enough to achieve statistically significant results. Calling tests early is one of the fastest ways to make incorrect decisions at scale.
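A minimal Python sketch of that sizing step, using the standard normal-approximation formula for comparing two conversion rates. The defaults (5% significance level, 80% power) are common conventions chosen for this example, and the function name is hypothetical.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variant(baseline: float, mde_abs: float,
                            alpha: float = 0.05, power: float = 0.8) -> int:
    """Users needed per variant to detect an absolute lift of `mde_abs`.

    baseline: current conversion rate, e.g. 0.10 for 10%
    mde_abs:  minimum detectable effect in absolute terms, e.g. 0.02
    """
    p1 = baseline
    p2 = baseline + mde_abs
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided test
    z_beta = NormalDist().inv_cdf(power)
    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return ceil(numerator / mde_abs ** 2)


if __name__ == "__main__":
    print(sample_size_per_variant(baseline=0.10, mde_abs=0.02))
```

For a 10% baseline and a 2 percentage point lift, this lands on the order of 3,800 users per variant. Halving the detectable effect roughly quadruples the requirement, which is why small expected lifts need long-running tests.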

Treat experimentation as a system

Open source works best when the experimentation process is structured and repeatable. Define clear workflows for running, analyzing, and documenting experiments across your team.

Clean up flags after experiments conclude

Feature flags and test code pile up faster than expected. Clearing them out once a test is done helps keep things manageable and avoids unnecessary complexity later on.
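One lightweight way to support this cleanup is to check which concluded flags are still referenced in code. The Python sketch below assumes flag keys appear as quoted string literals and takes file contents as an in-memory dictionary. Both are simplifications for illustration, and the function name and flag keys are hypothetical.

```python
import re


def find_flag_references(source_files: dict[str, str],
                         flag_keys: list[str]) -> dict[str, list[str]]:
    """Return, for each flag key, the files whose text still mentions it."""
    references: dict[str, list[str]] = {key: [] for key in flag_keys}
    for path, text in source_files.items():
        for key in flag_keys:
            # Match the key only when it appears as a quoted string literal.
            pattern = "[\"']" + re.escape(key) + "[\"']"
            if re.search(pattern, text):
                references[key].append(path)
    return references


if __name__ == "__main__":
    files = {
        "checkout.py": 'if flags.is_on("new-checkout"): render_new()',
        "banner.py": "show = flags.is_on('old-banner')",
    }
    concluded = ["new-checkout", "old-banner", "retired-flag"]
    for key, paths in find_flag_references(files, concluded).items():
        print(key, "->", paths or "no references, safe to archive")
```

In a real repository, the dictionary would be built by reading source files from disk, and the list of concluded flags would come from whichever flag platform you use.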

Conclusion: When open source is no longer enough

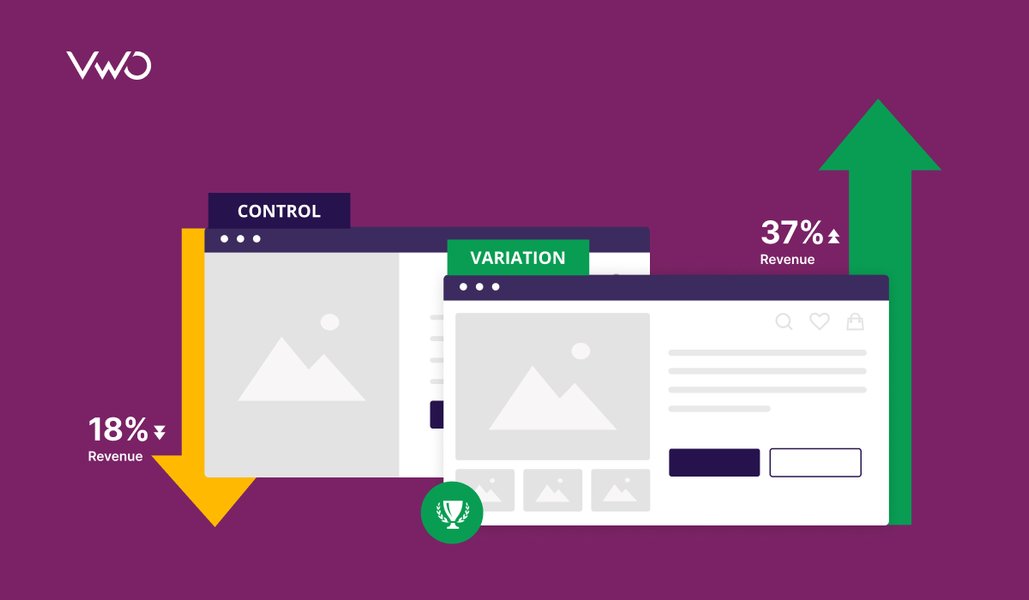

As your experimentation program matures, speed becomes a competitive advantage. The need for faster iteration, combined with gaps in behavioral analytics and hypothesis management, often pushes teams toward purpose-built platforms.

VWO bridges this gap by unifying the entire experimentation lifecycle on a single platform, rather than swapping one point solution for another.

VWO Testing enables teams to run A/B, multivariate, and split URL tests through a Visual Editor, so marketing and UX teams can launch experiments without relying on engineering for front-end changes.

Vandebron put this to work by using VWO Insights to identify friction in their sign-up flow’s date-of-birth field, then testing a simpler version. This change led to a 16.3% increase in sign-ups along with a significant drop in abandonment.

For advanced experimentation use cases, VWO Feature Experimentation supports server-side experiments, feature flagging, and controlled rollouts via SDKs, giving product teams feature management flexibility without sacrificing experimentation rigor.

Further, by analyzing how different audience segments respond during post-test report segmentation, VWO Personalize helps you extend tailored experiences to relevant user groups. This makes it easier to turn test insights into targeted personalization campaigns without rebuilding experiences from scratch.

Identify audience segments automatically with VWO Copilot by analyzing how different groups respond to test variations and influence key metrics. Manually uncovering these insights can be time-consuming, but Copilot does it automatically, highlighting valuable audience segments that you might otherwise miss. These insights can then be used to personalize campaigns or plan follow-up experiments for specific segments.

Ready to go beyond open source? Start your free trial or book a demo with the VWO team.

FAQs

Yes, tools like GrowthBook, PostHog, and Unleash are built to handle high-traffic environments, but performance at scale depends heavily on your infrastructure setup and how well the tool is configured and maintained.

Start with how your team is set up: technical bandwidth, data setup, and any compliance needs. Those three things usually narrow down your options much faster than comparing features.

Most open source tools are free to use, but costs can arise from infrastructure, maintenance, and the engineering effort required to run and scale them.