Take a look at the history of technological progress.

You can see that advanced technology did not come out of the blue. It evolved with one advancement becoming the foundation for another.

For instance, the smartphone industry stands on the foundation of numerous technological breakthroughs. From the initial landline telephones, the concept of cordless phones emerged, followed by the integration of mobile communication with computing power.

Over time, we witnessed an evolution from personal digital assistants, such as BlackBerry devices, to the advent of the iPhone, which paved the way for the smartphone industry.

It’s like a loop, where each advancement creates new opportunities that, in turn, lead to further progress. The loop has revolutionized our technology because we never left a loose end after an advancement.

What if we followed the same approach toward experimentation on digital properties?

Experimentation can sometimes lift your conversion rate beyond expectations, and at times the rate can drop even when the hypothesis looked promising. It’s part and parcel of the process.

But if you stick to a linear approach, closing each test once the results are in and moving on to something new, you will rarely get breakthroughs. You’ll miss out on chances to improve conversion rates and overlook valuable insights for future success. Even in the best-case scenario, your growth rate will plateau.

That is why it’s time to move on from the linear approach and take a strategic approach with the Experimentation Loop to realize the true conversion potential of your websites and mobile apps.

But what is an Experimentation Loop? Let’s delve into the concept.

What is an Experimentation Loop?

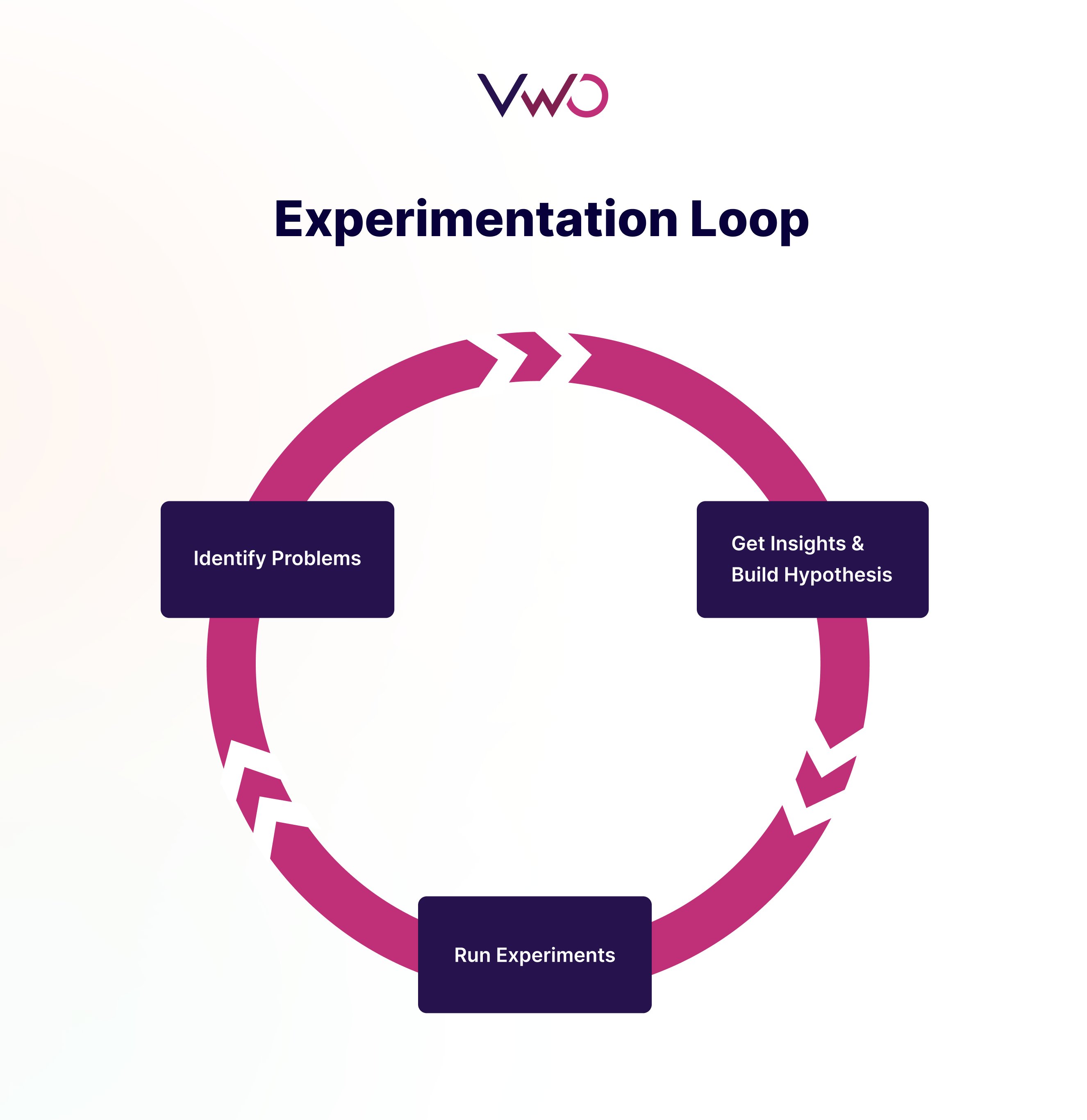

An Experimentation Loop starts with identifying a problem through behavior analysis and creating a solution in the form of a hypothesis. Then, you run experiments to test the hypothesis. The test either wins or loses, and with a linear approach, the experimentation cycle stops here. With the Experimentation Loop, you instead investigate the test results to uncover valuable insights. Those insights can yield new hypotheses, which lead to further experiments, creating a continuous cycle of learning and optimization.

Here’s a visual illustration of how the Experimentation Loop works:

With Experimentation Loops, you are not just stopping at the results but diving deeper to understand the reasons behind the results, identifying anomalies, and discovering if particular audiences (or participants of the experiment) react differently from others. This becomes the foundation for your new hypothesis and experiments.

It is especially critical in today’s ever-changing digital landscape, where user behavior is constantly evolving. By embracing the continuous learning and optimization provided by Experimentation Loops, you can stay ahead of the curve and keep improving your conversion rate.

Understanding the Experimentation Loop with an example

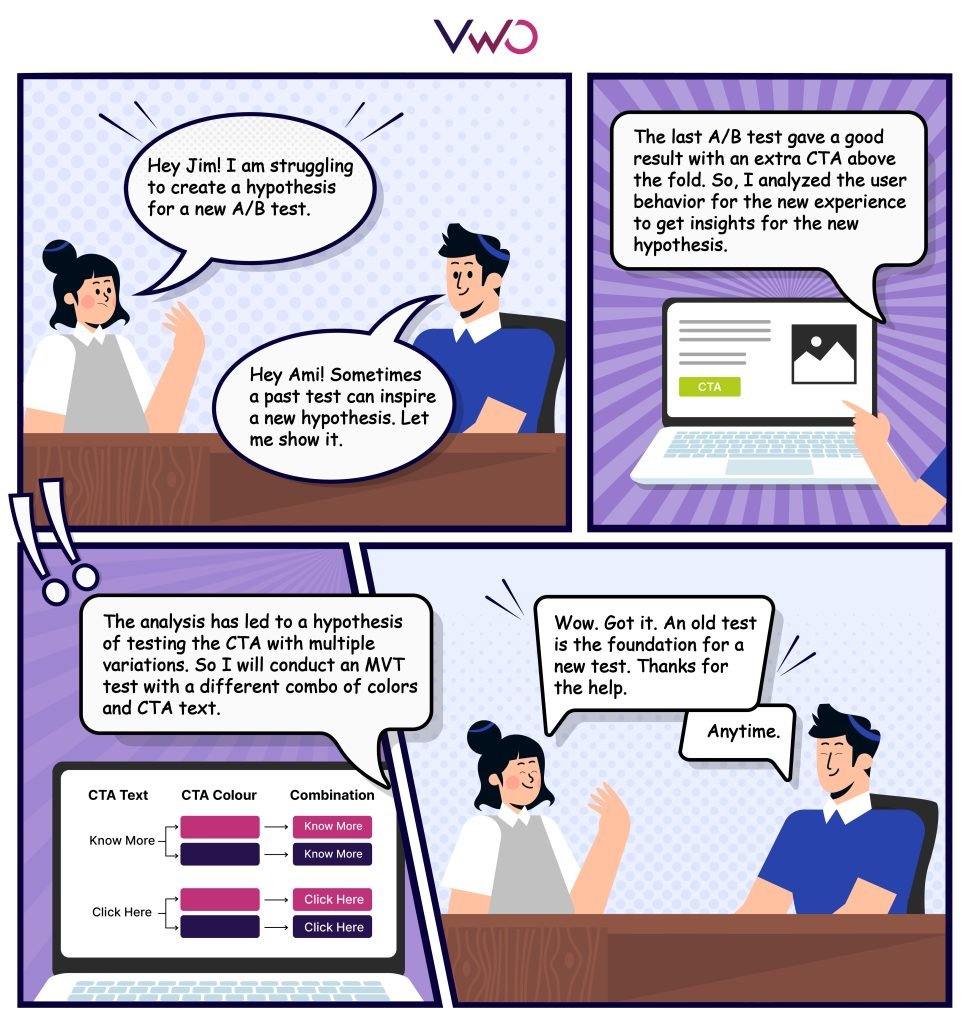

Here is a hypothetical example that explains how the Experimentation Loop functions:

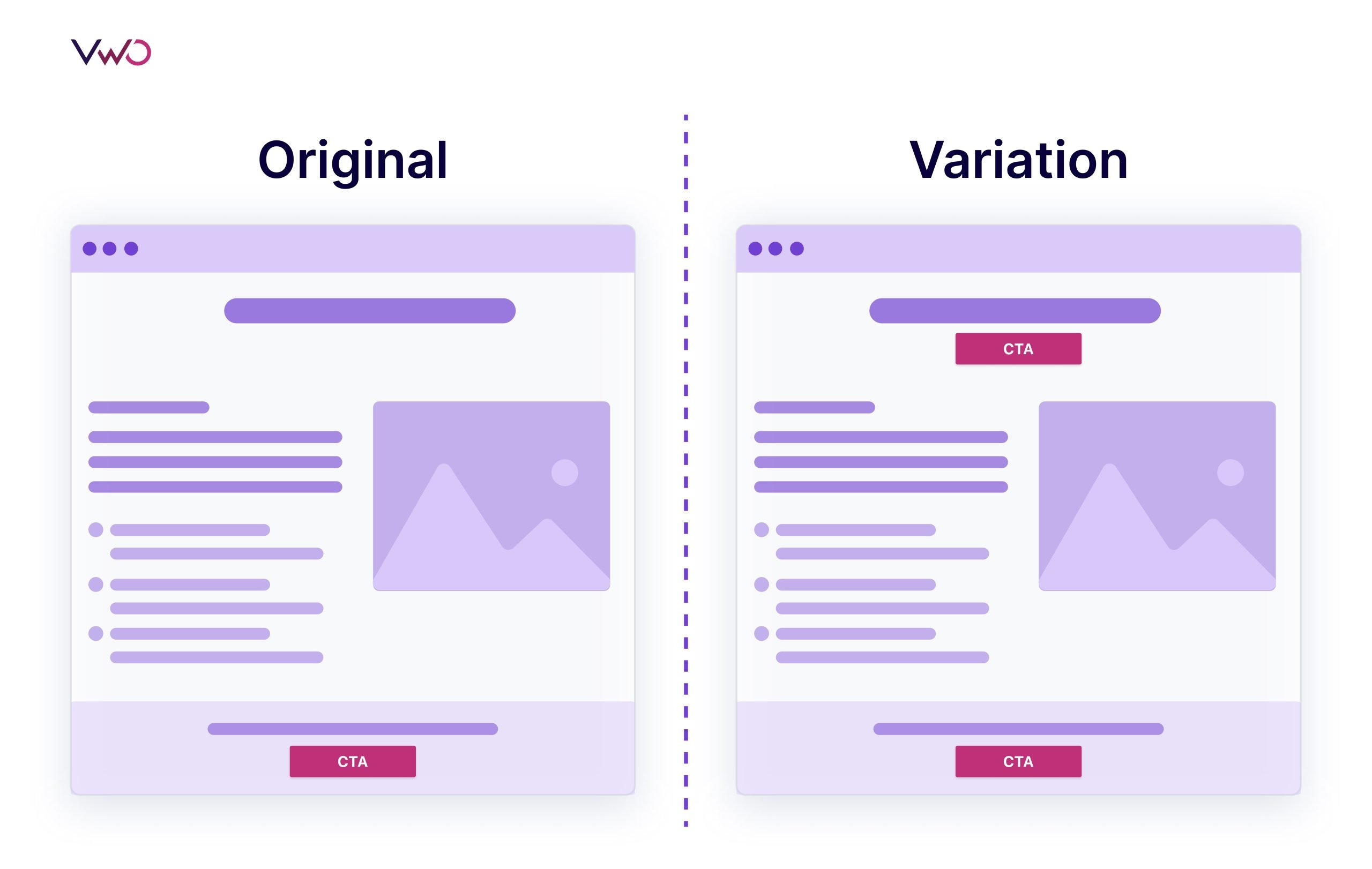

Consider a landing page created with the intent to generate leads. The original version of the page has a description of the offering in the first fold, followed by the call-to-action (CTA) button that will lead to the contact form.

Let’s say that the behavioral analysis of the landing page reveals many visitors dropping off on the first fold. This leads to the hypothesis that adding a CTA above the fold will improve engagement. To test it, you create an A/B test that compares the original version with a variation that has an additional CTA above the fold.

Here is the visual representation of the original and the variation of the landing page:

Let’s assume that the test ends with the variation outperforming the original in terms of the conversion rate (measured here as the share of visitors who click the CTA). Here, the traditional approach concludes the test. But with the Experimentation Loop, we analyze the results to come up with more hypotheses and open up multiple opportunities for improvement.

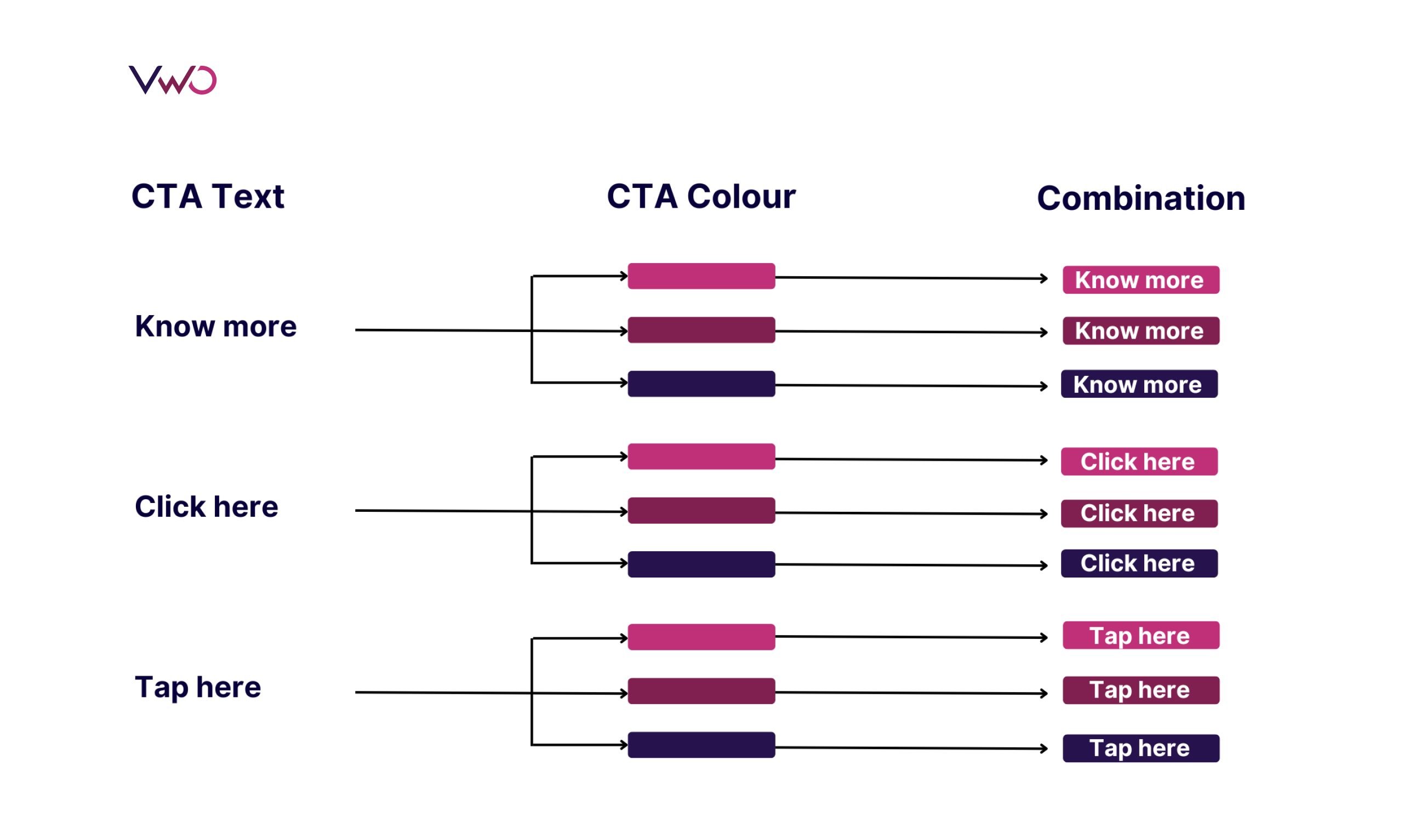

Suppose we zero in on the hypothesis that calls for testing the CTA button. Then, the second round will involve coming up with multiple variations of the CTA text and CTA color to optimize the button. To find the best variation, we can run a multivariate test that compares the original version with multiple variations built from different combinations.

At the end of the test, there can be an uplift in conversion that would not have been possible with the traditional approach. And if the test fails to get an uplift in conversion rate, it will still produce insights that help you learn more about your users.
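To make the idea of "different combinations" concrete, here is a minimal sketch of how the variations for such a multivariate test could be enumerated. The CTA texts and colors are hypothetical placeholders, not values from the example above, and the helper name `build_variations` is an assumption for illustration only.

```python
from itertools import product

# Hypothetical options for the two factors under test (CTA text and CTA color).
cta_texts = ["Contact us", "Get a quote", "Talk to sales"]
cta_colors = ["green", "orange"]


def build_variations(texts, colors):
    """Return every text/color combination as a list of dicts."""
    return [{"text": text, "color": color} for text, color in product(texts, colors)]


variations = build_variations(cta_texts, cta_colors)

# Three texts x two colors gives six combinations to compare.
for index, variation in enumerate(variations, start=1):
    print(index, variation)
```

The number of combinations grows multiplicatively with each added factor, so every extra option means the traffic is split across more variations.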

Likewise, we can check the results to see whether a particular audience segment engaged with the button more than others, and whether its members share common attributes. If so, it could lead to a hypothesis for a personalization campaign, such as personalizing the heading or subheading before the CTA based on the behavioral, demographic, or geographic attributes of the segment.
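The segment check described above can be sketched as a simple per-segment conversion breakdown. The visitor records, segment names, and the function `conversion_by_segment` below are all made up for illustration; real data would come from your own analytics export.

```python
from collections import defaultdict

# Hypothetical per-visitor records: (segment, clicked_cta).
visits = [
    ("mobile", True), ("mobile", False), ("mobile", True), ("mobile", True),
    ("desktop", False), ("desktop", True), ("desktop", False), ("desktop", False),
]


def conversion_by_segment(records):
    """Return the share of visitors who converted, keyed by segment."""
    counts = defaultdict(lambda: [0, 0])  # segment -> [conversions, visitors]
    for segment, converted in records:
        counts[segment][1] += 1
        if converted:
            counts[segment][0] += 1
    return {segment: conv / total for segment, (conv, total) in counts.items()}


rates = conversion_by_segment(visits)
for segment, rate in sorted(rates.items(), key=lambda item: item[1], reverse=True):
    print(f"{segment}: {rate:.0%}")
```

A large gap between segments is a prompt for a new hypothesis, not a conclusion in itself, especially when the segments are as small as in this toy data.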

Thus, an Experimentation Loop opens up the opportunity to improve, which is not possible with a siloed and linear approach.

But how can you carry out the successful execution of the Experimentation Loop?

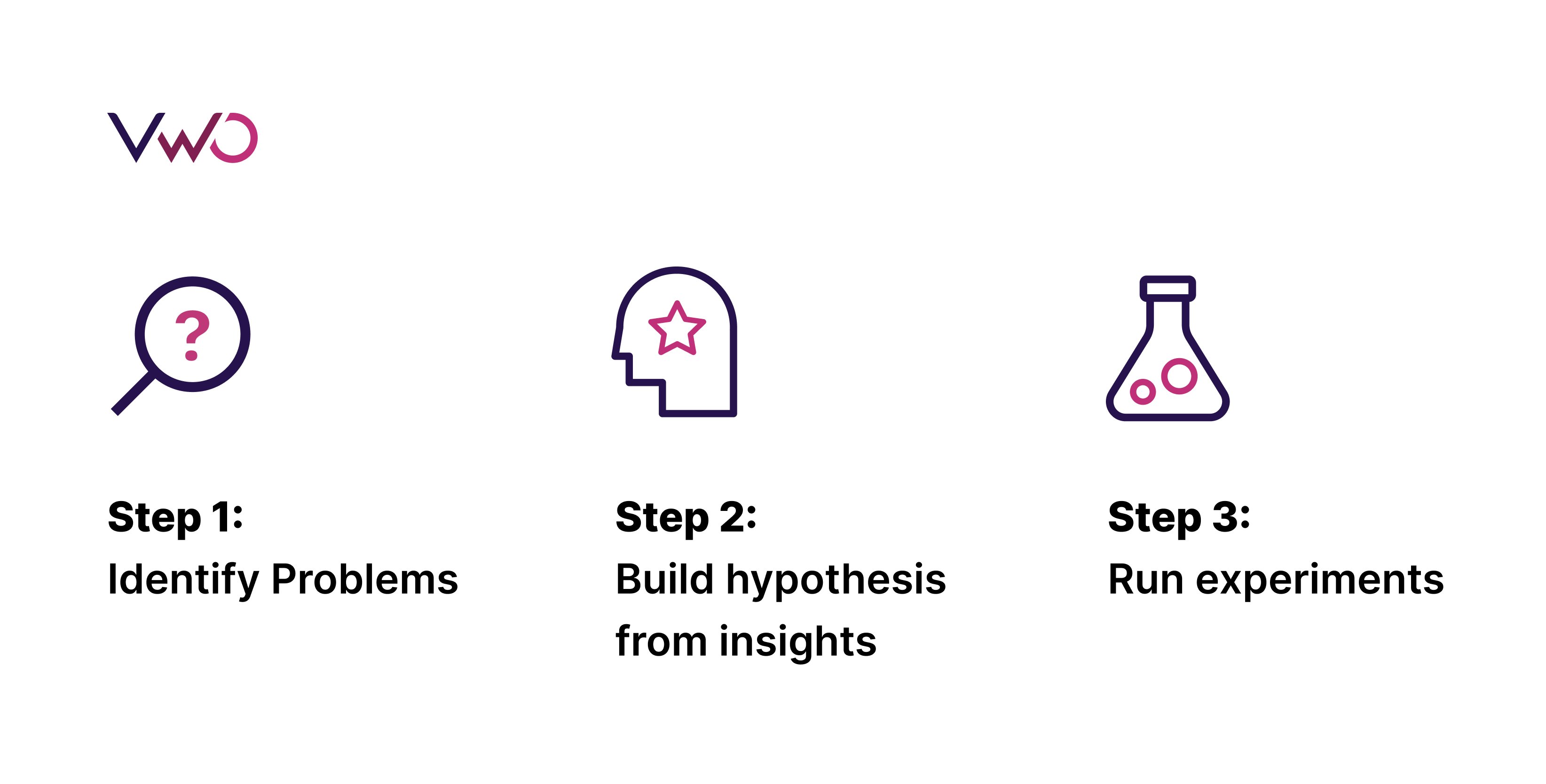

The Experimentation Loop consists of three steps, and we will go through each of them in the next section.

Three steps in the Experimentation Loop

The following are the three key steps in the Experimentation Loop for improving conversions.

Step 1: Identify problems

The Experimentation Loop starts with identifying the existing problem in user experience. First, you do a quantitative analysis that involves going through key metrics like conversion rate, bounce rate, and page views to identify the low-performing pages on the user journey.
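As a rough sketch of this quantitative pass, the snippet below ranks pages by conversion rate to surface the weakest ones. The page names and numbers are invented, the function `weakest_pages` is hypothetical, and dividing by page views is a simplification (analytics tools typically compute these rates per session).

```python
# Hypothetical page-level metrics for one reporting period.
pages = [
    {"page": "/home", "views": 12000, "conversions": 480, "bounces": 4800},
    {"page": "/pricing", "views": 3000, "conversions": 90, "bounces": 1500},
    {"page": "/landing", "views": 5000, "conversions": 50, "bounces": 3500},
]


def weakest_pages(page_stats, limit=2):
    """Return the `limit` pages with the lowest conversion rate, with rates attached."""
    scored = [
        {
            "page": stats["page"],
            "conversion_rate": stats["conversions"] / stats["views"],
            "bounce_rate": stats["bounces"] / stats["views"],
        }
        for stats in page_stats
    ]
    return sorted(scored, key=lambda row: row["conversion_rate"])[:limit]


weakest = weakest_pages(pages)
for row in weakest:
    print(f'{row["page"]}: conversion {row["conversion_rate"]:.1%}, bounce {row["bounce_rate"]:.0%}')
```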

Once you zero in on the weak links, you can do a qualitative analysis to understand the pain points. You can check session recordings and heatmaps to see how each element that affects the conversion rate is performing.

Once you identify the problems associated with those elements, you can use them to draft a hypothesis.

Step 2: Build hypothesis from insights

After identifying the elements that are hurting conversions, you can start digging into the insight data to make sense of it.

For example, suppose the quantitative and qualitative analyses point to the position of the banner image as the reason for the blog’s high bounce rate. You can then build a hypothesis that proposes a new position for the image as a solution to the high bounce rate.

While framing the hypothesis, you should specify the key performance indicator (KPI) to be measured, the expected uplift, and the element to test.
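One way to keep these three pieces together is to record each hypothesis in a small structure. This is only a sketch: the `Hypothesis` class, its field names, and the 10% figure are assumptions made for illustration, not part of any tool's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    """A testable hypothesis: the element, the change, the KPI, and the expected uplift."""
    element: str
    change: str
    kpi: str
    expected_uplift: float  # relative improvement, e.g. 0.10 means 10%

    def statement(self) -> str:
        return (
            f"Changing the {self.element} ({self.change}) will improve "
            f"{self.kpi} by about {self.expected_uplift:.0%}."
        )


# Hypothetical values based on the banner image example.
banner = Hypothesis(
    element="blog banner image",
    change="move it below the introduction",
    kpi="bounce rate",
    expected_uplift=0.10,
)
print(banner.statement())
```

Writing the expected uplift down before the test runs makes it easier to judge the result afterward and to compare hypotheses across cycles of the loop.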

Next, you move forward to run the experiment.

Step 3: Run experiments

Based on the hypothesis, you choose from tests such as A/B, multivariate, split URL, and multipage tests. You run the test until it reaches statistical significance.
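For readers who want to see what a significance check can look like, here is a minimal sketch of a two-sided, two-proportion z-test using only the standard library. It is one common frequentist approach, not a description of how any particular testing platform computes significance, and the visitor and conversion counts are hypothetical.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference between two conversion rates."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_error
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: control converts 200 of 5000 visitors, variation 250 of 5000.
z, p_value = two_proportion_z_test(200, 5000, 250, 5000)
is_significant = p_value < 0.05  # assumed significance threshold
print(f"z = {z:.2f}, p = {p_value:.4f}, significant: {is_significant}")
```

A fixed-sample test like this assumes the sample size was decided in advance; checking it repeatedly and stopping at the first significant reading inflates the false-positive rate.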

The test may change the conversion rate, and the insights into how users behave with the new experience can reveal areas to explore in the second cycle of experimentation.

Thus, the Experimentation Loop will constantly carve a path to improve conversion.

Experimentation Loop and sales funnel

Running Experimentation Loops at every stage of the funnel can substantially improve the conversion rate and provide a strategic framework for testing hypotheses rather than a haphazard approach.

To keep improving the conversion rate of the same element, you can run an Experimentation Loop, as in the earlier example that moved from an A/B test to a multivariate test.

Alternatively, you can analyze the insights from a test that improved a metric to see how it affected other metrics, which could lead to the second cycle of the test.

For instance, let’s take the awareness stage. The goal in this stage is to attract users and introduce them to products or services on a digital platform.

Suppose you ran an A/B test on search ads to get more users to the website and monitored metrics like the number of visitors.

Let’s say the test led to an improvement in traffic. Now, you can move on to analyze other metrics, such as % scroll depth and bounce rate for the landing page, and identify areas for improvement. To pinpoint the specific areas where users are leaving, you can use tools such as scroll maps, heat maps, and session recordings. The analysis can lead you to create hypotheses for the second leg of the experiment. It could involve improving user engagement by testing a visual element or a catchy headline.

Likewise, running the Experimentation Loop at other stages of the funnel can optimize the micro journey that the customer takes at each stage. Moreover, insights from one funnel stage can generate hypotheses for another, resulting in a seamless experience that is hard to achieve with a siloed approach.

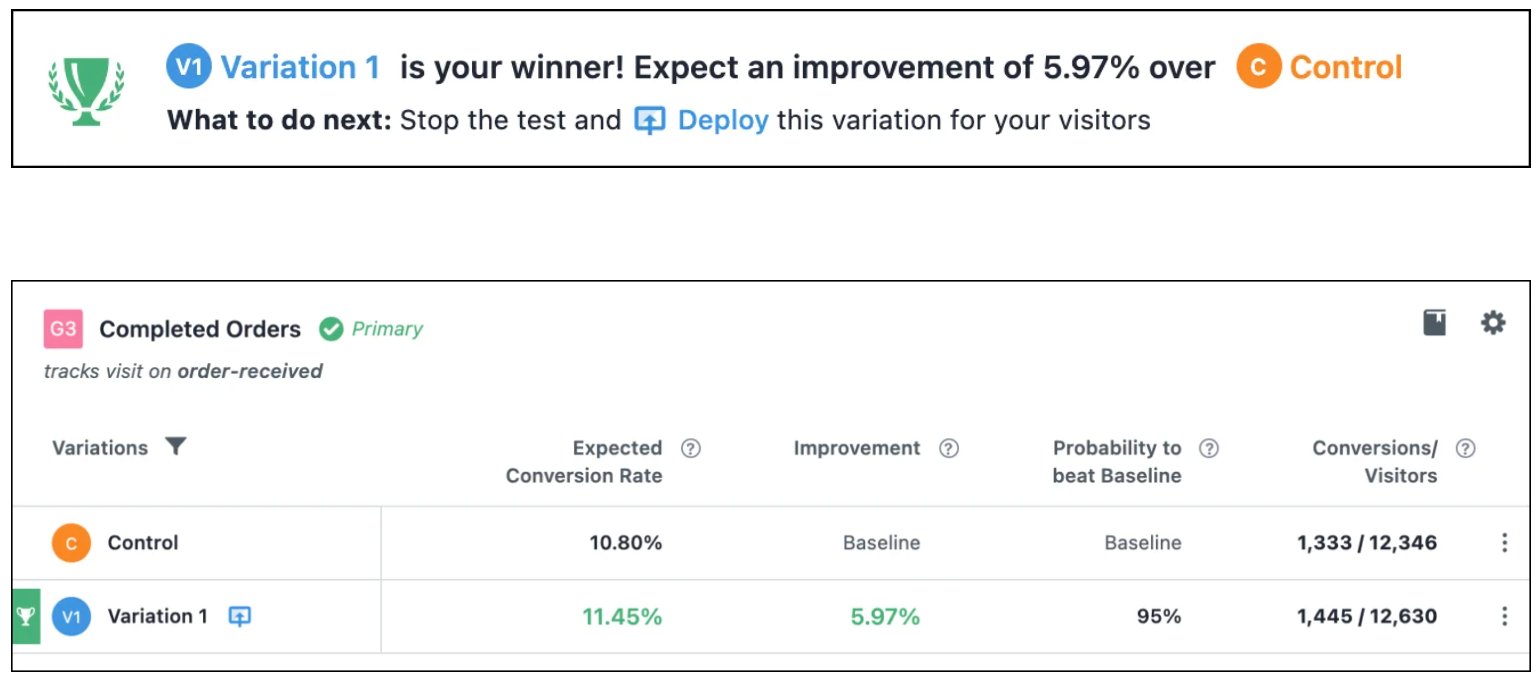

How Frictionless Commerce uses Experimentation Loops for conversion copywriting

Frictionless Commerce, a digital agency, has relied on VWO for over ten years to conduct A/B testing on new buyer journeys. They have established a system where they build new experiments based on their previous learnings. Through iterative experimentation, they have identified nine psychological drivers that impact first-time buyer decisions.

Recently, they worked with a client in the shampoo bar industry, for whom they created landing page copy that incorporated all nine drivers. After running the test for five weeks, they saw an increase of 5.97% in conversion rate, resulting in 2778 new orders.

It just shows how Experimentation Loops can bring valuable insights and take your user experience to the next level.

You can learn more about Frictionless Commerce’s experimentation process in their case study.

Conclusion

Embracing the continuous learning and optimization provided by Experimentation Loops is crucial for businesses looking to stay ahead of the curve and improve their conversion rates.

To truly drive success from your digital property, it’s time to break the linear mold and embrace the Experimentation Loop. By using a strategic framework for testing hypotheses, rather than a haphazard approach, businesses can continuously optimize and improve their digital offerings.

You can create Experimentation Loops using VWO, the world’s leading experimentation platform. VWO offers free testing for up to 5000 monthly tracked users. Visit our plans and pricing page now for more information.