You conducted an A/B test—great! But what next?

How would you derive valuable insights from the results of A/B testing? And more importantly, how would you incorporate those insights into subsequent tests?

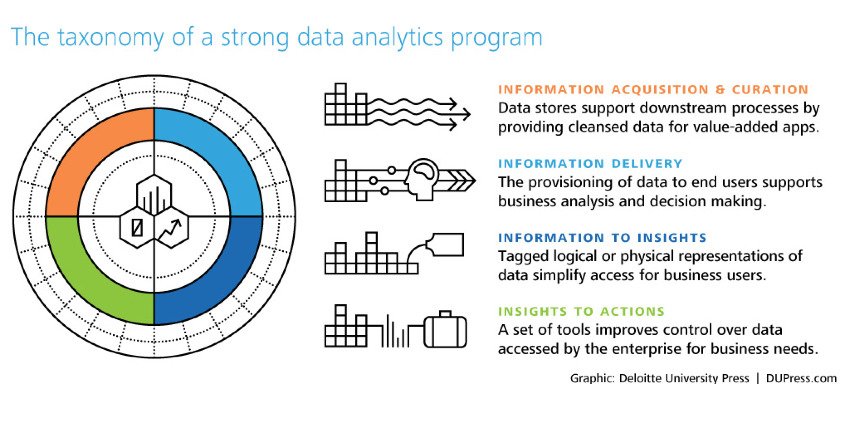

Acquiring information is perhaps the easier part of a data analysis program. Drawing insights from that information and converting those insights into actions is what leads to successful results.


This post talks about why and how you should derive insights from your A/B test results and eventually apply them to your conversion rate optimization (CRO) plan.

Creating a standardized process for A/B test analysis

A positive result, a negative one, or even an inconclusive test all have something to tell you. A structured analysis process ensures you extract consistent, actionable insights from each one, not just a one-time win. Here is a five-step process to make that happen:

- Step 1: Track the right metrics

- Step 2: Run post-test segmentation

- Step 3: Dig into visitor behavior

- Step 4: Maintain a knowledge repository

- Step 5: Apply the learning to your next test

Let us walk through each one in detail.

“It amazes me how many organizations conflate the value of A/B testing. They often fail to understand that the value of testing is to get not just a lift but more of learning.

Sure a 5% to 10% lift in conversion is great; however, you are also trying to find out what makes your customers say ‘yes’ to your offer.

Only with A/B testing can you close the gap between customer logic and company logic and, gradually, over time, match the internal thought process that is going on in your customers’ heads when they are considering your offer on your landing page or within your app.”

CEO of GreenPal

Step 1: Tracking the right metric(s)

When you analyze A/B test results, check that you are looking at the correct metric. If multiple metrics are involved (secondary metrics along with the primary one), analyze each of them individually. Ideally, you should track both micro and macro conversions.
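
To make the idea of analyzing each metric individually concrete, here is a minimal Python sketch. The metric names and visitor counts are made up for illustration, and `MetricResult` is a hypothetical helper, not part of any testing platform.

```python
from dataclasses import dataclass


@dataclass
class MetricResult:
    """Raw counts for one metric in a control-vs-variation test."""
    name: str
    control_conversions: int
    control_visitors: int
    variation_conversions: int
    variation_visitors: int

    def control_rate(self) -> float:
        return self.control_conversions / self.control_visitors

    def variation_rate(self) -> float:
        return self.variation_conversions / self.variation_visitors

    def relative_lift(self) -> float:
        """Relative change of variation over control (0.10 means +10%)."""
        return (self.variation_rate() - self.control_rate()) / self.control_rate()


# Illustrative numbers only: one macro conversion and one micro conversion.
metrics = [
    MetricResult("purchase (macro, primary)", 200, 10_000, 230, 10_000),
    MetricResult("add_to_cart (micro, secondary)", 900, 10_000, 880, 10_000),
]

for m in metrics:
    print(
        f"{m.name}: control={m.control_rate():.2%} "
        f"variation={m.variation_rate():.2%} lift={m.relative_lift():+.1%}"
    )
```

In this made-up example the primary metric improves while the secondary one dips slightly, which is exactly the kind of mixed picture you would miss by looking at a single number.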

Most A/B testing platforms have built-in analytics sections to track all the relevant metrics. Moreover, you can also integrate these testing platforms with the most popular website analytics tools, such as Google Analytics.

Integrating VWO with a third-party tool is simple and lets you push your VWO test data into the external tool. It also lets you use the data these tools make available on the website to target campaigns.

VWO offers seamless integration with popular analytics tools as well as plugins for content management systems, which makes it very easy to install the VWO code and start optimizing your website.

“It’s important to never rely on just one metric or data source. When we focus on only one metric at a time, we miss out on the bigger picture. Most A/B tests are designed to improve conversions. But what about other business impacts such as SEO?

It’s critical to make an inventory of all the metrics that matter to your business, before and after every test that you run. In the case of SEO, it may require you to wait for several months before the impacts surface. The same goes for data sources. Reporting and analytics platforms aren’t accurate 100 percent of the time, so it helps to use different tools to measure the performance and engagement. It’s easier to isolate reporting inaccuracies and anomalies when you can compare results across different platforms.”

Founder at Beymour Consulting

Step 2: Conducting post-test segmentation

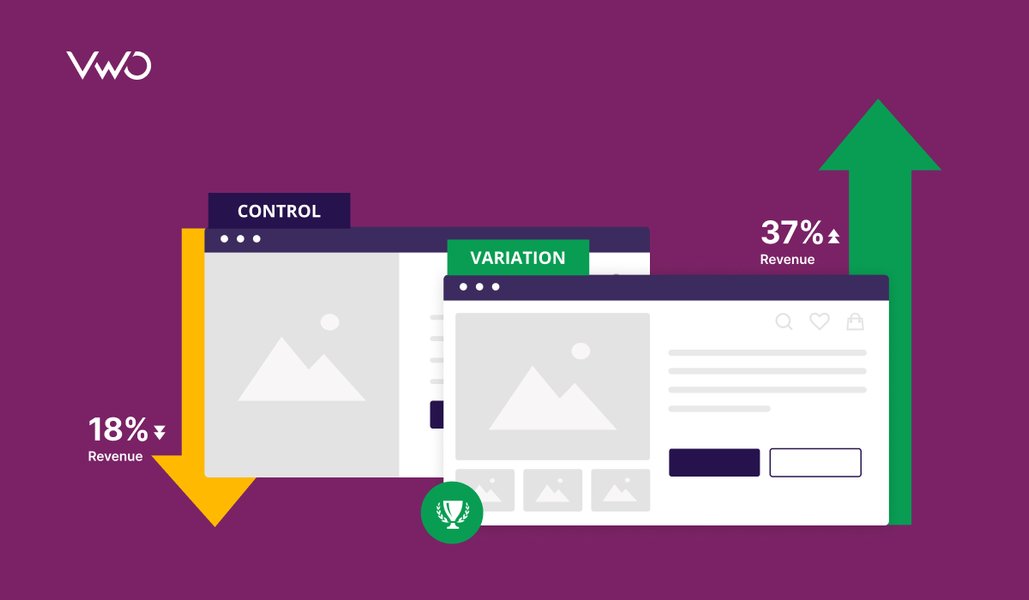

You should also segment your A/B test results and analyze each segment separately to get a clearer picture of what is happening. Generic, nonsegmented analysis can produce illusory results that lead to skewed actions.

There are several broad types of segmentation that you can use to divide your audience. Here is a set of segmentation approaches from Chadwick Martin Bailey:

- Demographic

- Attitudinal

- Geographical

- Preferential

- Behavioral

- Motivational

Post-test segmentation in VWO Testing allows you to deploy a variation to a specific user segment. For instance, if you notice that a test affected new and returning users in notably different ways, you may want to apply your variation only to the segment where it performed better.

However, searching through many different segments after a test means you are almost certain to see some positive results purely by random chance. To avoid that, make sure you have your goal clearly defined.
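
One common safeguard against these chance findings is to correct for multiple comparisons. The sketch below applies the Holm-Bonferroni procedure to a set of per-segment p-values. The segment names and p-values are invented for illustration, and this is a general statistical technique rather than a description of how VWO or any other platform handles segments.

```python
def holm_bonferroni(p_values: dict[str, float], alpha: float = 0.05) -> dict[str, bool]:
    """Return, per segment, whether its result survives Holm-Bonferroni correction."""
    ordered = sorted(p_values.items(), key=lambda item: item[1])
    m = len(ordered)
    significant = {name: False for name in p_values}
    for rank, (name, p) in enumerate(ordered):
        # The smallest p-value is compared with alpha/m, the next with alpha/(m-1), ...
        if p <= alpha / (m - rank):
            significant[name] = True
        else:
            break  # once one comparison fails, all larger p-values fail too
    return significant


# Hypothetical p-values from five post-test segments.
segment_p_values = {
    "new_visitors": 0.004,
    "returning_visitors": 0.030,
    "mobile": 0.041,
    "desktop": 0.200,
    "paid_traffic": 0.650,
}

result = holm_bonferroni(segment_p_values)
for name, keep in result.items():
    print(f"{name}: {'significant' if keep else 'not significant'} after correction")
```

Three of these segments would look like wins at an uncorrected 0.05 threshold, but only `new_visitors` survives the correction.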

Here’s how you can use the various segmentation options provided by VWO to slice and dice your website traffic and understand visitor behavior.

Step 3: Delving deeper into visitor behavior analysis

You should also use visitor behavior analysis tools such as Heatmaps, Scrollmaps, and Visitor Recordings to gather further insights into A/B test results. For example, consider a search bar on an eCommerce website. An A/B test on the search bar works only if users actually use it. Visitor recordings can reveal whether users find the search bar friendly and engaging. If the bar itself is hard to understand, every variation of it may fail to influence users.

Apart from giving insights on specific pages, visitor recordings can also help you understand user behavior across your entire website (or conversion funnel). You can learn how critical the page on which you are testing is in your conversion funnel.


Step 4: Maintaining a knowledge repository

After analyzing your A/B tests, it is imperative to document the observations from the tests. This helps you not only transfer knowledge within the organization but also use it for reference later.

For instance, suppose you are developing a hypothesis for your product page and want to test the product image size. Using a structured repository, you can easily find similar past tests that could help you understand patterns in that location.

To maintain a good knowledge base of your past tests, you need to structure them appropriately. You can organize past tests and the associated learning in a matrix, differentiated by their “funnel stage” (ToFu, MoFu, or BoFu) and “the elements that were tested.” You can add other customized factors as well to enhance the repository.
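
As a rough illustration of such a matrix, the following Python sketch groups past tests by funnel stage and tested element. The `ExperimentRecord` fields and the sample entries are assumptions made for this example; adapt them to whatever your team actually records.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class ExperimentRecord:
    """One past test in the repository (hypothetical schema)."""
    name: str
    funnel_stage: str   # "ToFu", "MoFu", or "BoFu"
    element: str        # the page element that was tested
    hypothesis: str
    outcome: str        # "win", "loss", or "inconclusive"
    takeaway: str


def build_matrix(records):
    """Group records by (funnel stage, tested element)."""
    matrix = defaultdict(list)
    for record in records:
        matrix[(record.funnel_stage, record.element)].append(record)
    return matrix


# Invented sample entries, for illustration only.
records = [
    ExperimentRecord("Larger hero image", "BoFu", "product image",
                     "A bigger image increases add-to-cart", "win",
                     "Visitors engage more with larger product photos"),
    ExperimentRecord("Image carousel", "BoFu", "product image",
                     "Multiple angles increase purchases", "inconclusive",
                     "Few visitors clicked past the first image"),
    ExperimentRecord("Shorter signup form", "MoFu", "form",
                     "Fewer fields increase signups", "loss",
                     "Lead quality dropped without the company field"),
]

matrix = build_matrix(records)

# Look up earlier tests on the same element before writing a new hypothesis.
for past in matrix[("BoFu", "product image")]:
    print(f"{past.name}: {past.outcome} ({past.takeaway})")
```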

“At a previous company, I tracked tests in a spreadsheet on a shared drive that anyone across the organization could access. The document included fields for:

- Start and end dates

- Hypotheses

- Success metrics

- Confidence level

- Key takeaways

Each campaign row was also linked to a PDF with a full summary of the test hypotheses, campaign creative, and results. This included a high-level overview, as well as detailed charts, graphs, and findings.

At the time of deployment, I sent out a launch email to key stakeholders with a summary of the campaign hypothesis and test details and attached the PDF. I followed up with a results summary email at the conclusion of each campaign.

Per my experience, concise email summaries were well-received; few users ever took a deep dive into the more comprehensive document.

Earlier, I created PowerPoint decks for each campaign I deployed, but ultimately found that this was time-consuming and impeded the agility of our testing program.”

– Sarah Hodges,

Co-founder at Intelligent.ly

Step 5: Applying the learning to your next A/B test

After you have analyzed the tests and documented them according to a predefined theme, make sure that you visit the knowledge repository before conducting any new test.

The results from past tests shed light on user behavior on a website. With a better understanding of user behavior, your CRO team can build stronger hypotheses. It can also help the team create on-page surveys that are contextual to a particular set of site visitors.

Moreover, results from past tests can help your team come up with new hypotheses quickly. The team can identify areas where the win from a past A/B test can be duplicated. The team can also look at failed tests, identify the reasons for their failure, and steer clear of repeating mistakes.

Tools for analyzing A/B test results at scale

Running tests is one thing, but extracting reliable insights from them at scale is another challenge. The right platform makes all the difference. Here are five tools worth considering for A/B test result analysis.

1. VWO

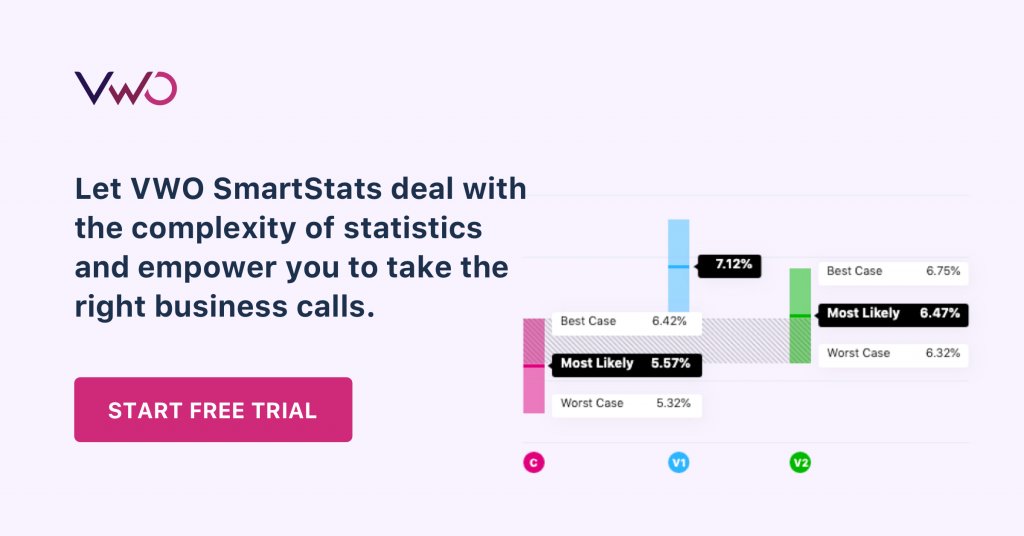

Runs on a Bayesian-powered SmartStats engine that accounts for common biases, such as sequential testing errors. It also offers editable statistical parameters, constant experiment health checks, and guardrail metrics, ensuring that your results remain reliable as test volume increases.

2. AB Tasty

Uses a Bayesian stats engine and pairs it with AI-driven visitor segmentation, including segmentation based on emotional states. This is useful when you need to go deeper than surface-level results.

3. Optimizely

Supports both Bayesian and Frequentist models and adds Sequential Hypothesis testing to improve accuracy. Its strong segmentation capabilities let you slice results by audience without losing statistical confidence.

4. Convert

Gives you the flexibility to switch between Frequentist and Bayesian models depending on the test. Post-segmentation support lets you dig into results after the fact.

5. Statsig

Processes raw events, exposure events, and pre-computed metrics together. Its heterogeneous effect detection helps surface results that vary across user segments, which is a feature that most tools skip.
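
Several of the tools above mention Bayesian models. As a rough sketch of what that means for a conversion test, the Python snippet below estimates the probability that a variation beats the control by sampling from Beta posteriors. It assumes a uniform Beta(1, 1) prior and made-up counts, and it is a textbook illustration only, not the implementation used by SmartStats or any other engine listed here.

```python
import random


def prob_b_beats_a(conv_a, n_a, conv_b, n_b, draws=20_000, seed=0):
    """Monte Carlo estimate of P(rate_b > rate_a) under Beta(1, 1) priors."""
    rng = random.Random(seed)  # fixed seed so the estimate is reproducible
    wins = 0
    for _ in range(draws):
        # Posterior for a conversion rate: Beta(1 + conversions, 1 + non-conversions)
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        if rate_b > rate_a:
            wins += 1
    return wins / draws


# Illustrative counts: 200/10,000 conversions for control, 260/10,000 for the variation.
print(f"P(variation beats control) = {prob_b_beats_a(200, 10_000, 260, 10_000):.3f}")
```

The output is a direct probability statement about the variation, which is one reason Bayesian reports are often considered easier to read than p-values.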

Conclusion

A/B testing is a challenging endeavour in itself. Deriving actionable insights from it is a whole different ball game. How do you analyze your A/B test results? Do you base your new test hypothesis on past learning? Data-driven decision-making has to be at the heart of your experimentation projects. Powered by a sequentially valid stats engine, VWO's experiment reports, with customizable stats options and bias correction, help you make reliable decisions in the shortest possible time.

Frequently asked questions (FAQs)

The success of an A/B testing program is directly tied to how much it improves key business metrics like revenue and lead generation. You can compare before and after data across a bi-annual or annual range to see how a series of experiments collectively moved the KPIs that matter.

Enterprise data analysts use A/B test results to understand how an optimization campaign performed for end users. Win or lose, the results feed the next iteration. That is what keeps the experimentation loop running.

A failed test is not a dead end. Analysts dig into user behavior, spot friction points, and build a new hypothesis. Each result, positive or negative, becomes the starting point for the next test.

Marketers start by checking whether a test reached statistical significance, typically with at least 1,000 users in the experiment. From there, they look at tracked metrics to measure conversion improvement. Behavior analysis of the variation adds the final layer, helping them understand why the numbers changed.
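
For readers who want to see what a basic significance check looks like, here is a minimal Python sketch of a two-sided, two-proportion z-test. It is a classic fixed-sample frequentist test with invented counts, shown only as an illustration. It is not the method used by Bayesian or sequential engines, and it assumes you look at the result once, after the planned sample size is reached.

```python
from math import erfc, sqrt


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference between two conversion rates."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both groups convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    standard_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / standard_error
    p_value = erfc(abs(z) / sqrt(2))  # two-sided tail probability of a standard normal
    return z, p_value


# Illustrative counts: control 200/10,000, variation 260/10,000.
z, p_value = two_proportion_z_test(200, 10_000, 260, 10_000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
print("significant at 5%" if p_value < 0.05 else "not significant at 5%")
```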