CRO is moving beyond website-level testing. Businesses are shifting toward experimentation-led decision-making, where the focus is on improving how business strategies are made, not just what gets tested.

That’s where CRO Perspectives plays a role, bringing together CRO and experimentation leaders to share how they think, operate, and evolve. Whether you’re starting out or moving toward more advanced use of CRO, the aim is to help you progress with clarity.

For our 22nd post, we spoke with Andres Pinate, Marketing Director and Strategic Consultant in CRO and Growth, based in Spain. He breaks down how decision design, consumer psychology, and structured experimentation come together to drive revenue systems.

Leader: Andres Pinate

Role: Marketing Director | Strategic Consultant in CRO & Growth

Location: Spain

Speaks about: Conversion Rate Optimization (CRO) • Decision Design • Consumer Psychology • Revenue Systems • Strategic Experimentation.

Why should you read this interview?

Andres Pinate views experimentation not as a growth trick, but as a strategic lens to illuminate how decisions are truly made.

With a career spanning industries such as consumer electronics, FMCG, mobility, and automotive, Andres has focused on bridging the gap between data-driven evidence and qualitative depth. He specializes in building “operating models” for experimentation that compound organizational intelligence over time.

His perspective moves beyond simple conversion tactics, focusing instead on how brands can win in a zero-click world through trust density and commercial logic. If you want to understand why the next competitive advantage won’t come from running more tests, but from making better decisions faster, Andres’s insights are essential.

B2B vs B2C experimentation: Key differences

The difference between B2B and B2C experimentation is not simply in volume or speed. It is in the nature of the decision itself. B2C often rewards immediacy, emotional clarity, and friction removal. B2B is governed by trust, consensus, and perceived risk. That changes the role of experimentation entirely.

In B2B, the best experiments do not just improve a metric; they illuminate how decisions are truly made. The strongest teams understand that experimentation is a strategic lens. In B2C, you are often testing for faster conversion, clearer messaging, and simpler paths to purchase. The feedback loop is shorter, and the emotional context is more immediate.

In B2B, the challenge is more layered because the buyer is rarely a single person, and the final decision is shaped by multiple stakeholders, internal politics, and a much higher tolerance for research. That means the experimentation framework must be more precise, more patient, and more connected to the actual commercial logic of the business.

The real opportunity in both sectors is not to test more, but to test better. Great experimentation is not about producing a long list of variations; it is about building a deeper understanding of why people choose, hesitate, or disengage. That is where strategy starts to separate itself from activity.

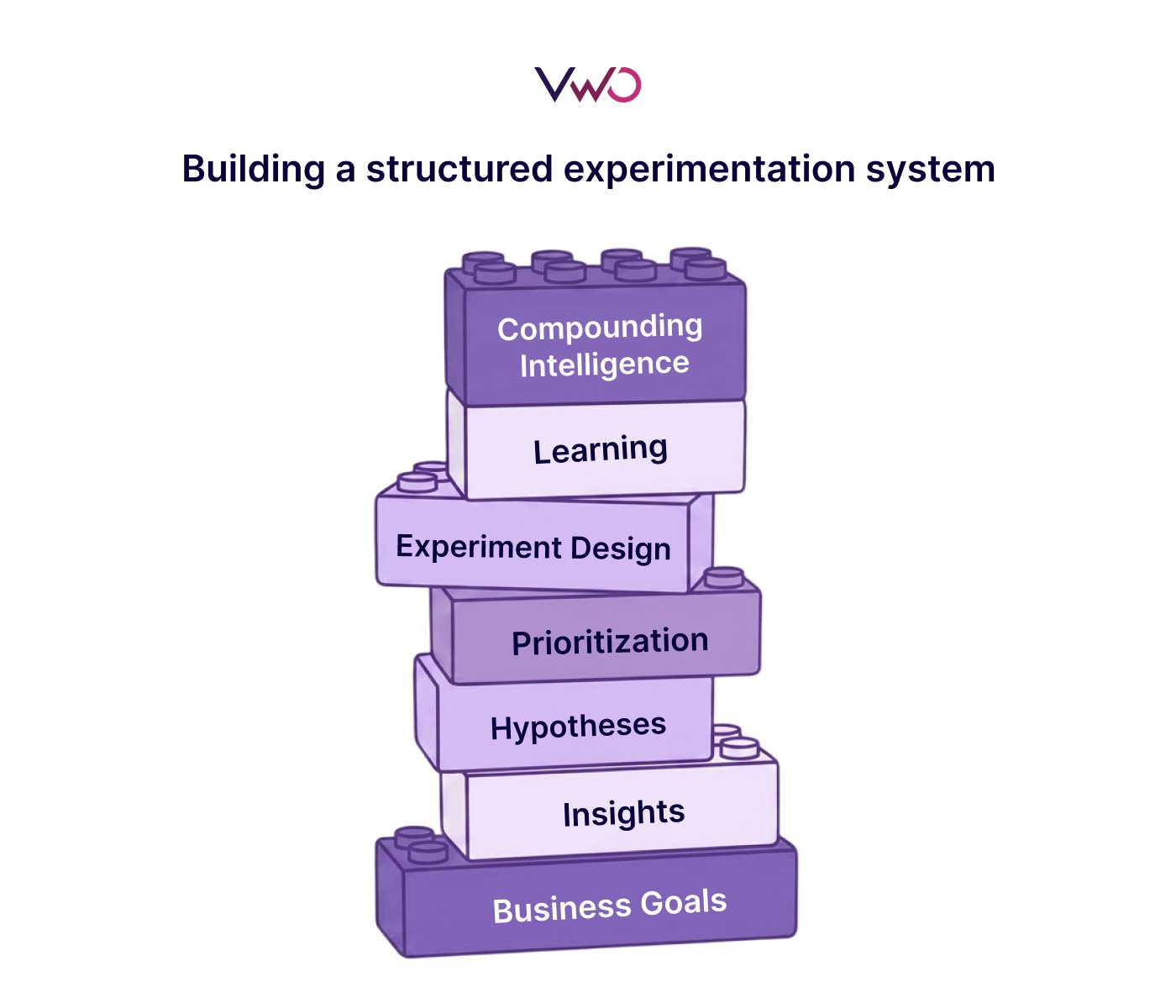

Building a structured experimentation system

A real experimentation system is not a collection of tests. It is an operating model. It requires a clear business thesis, disciplined hypothesis generation, ruthless prioritization, and a process that captures learning as an organizational asset. Without that, testing becomes performative: activity without accumulation. The goal is not to run more experiments; the goal is to build a system that compounds intelligence over time.

When I think about building this from scratch, I think in layers. First, there has to be alignment on what the business is actually trying to improve: revenue, retention, acquisition efficiency, customer quality, or something else. Then comes the idea intake layer, where insights from analytics, UX, customer feedback, sales, and support are converted into hypotheses. After that, prioritization becomes critical because not all ideas deserve equal attention. A mature system creates discipline around what gets tested, why, and how success is defined.

What separates strong teams from busy teams is that they treat learning as a reusable asset. Every test should make the organization smarter, even when it fails. If an experimentation program does not improve the quality of future decisions, it is just noise wrapped in process.

How to prioritize tests across the funnel

Prioritization is where maturity becomes visible. I focus on the intersection of business impact, user friction, and strategic leverage, because the best experiments are rarely the most obvious ones. A strong framework should eliminate weak ideas quickly and protect the tests that can reshape understanding, not just move a number. If a test does not improve the quality of decision-making, it is probably not worth the energy.

In practice, prioritization should force teams to be honest about trade-offs. A test may be easy to launch, but if it does not have meaningful commercial or behavioral relevance, it can become a distraction. I like frameworks that blend impact, confidence, and effort, but I also think there is a more sophisticated layer: strategic timing. Some experiments matter because of what they could teach the business at a particular moment, not just because of the metric they might move.
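To make the impact, confidence, and effort blend concrete, here is a minimal sketch in Python. It is an illustration rather than Andres's own scoring method: the `ExperimentIdea` class, the 1 to 10 scales, the `timing` multiplier, and the sample ideas are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class ExperimentIdea:
    """A candidate test. All fields and scales are hypothetical."""
    name: str
    impact: int          # 1-10: expected commercial or behavioral effect
    confidence: int      # 1-10: strength of the supporting evidence
    effort: int          # 1-10: cost to build and run (higher = costlier)
    timing: float = 1.0  # assumed multiplier for strategic timing


def priority_score(idea: ExperimentIdea) -> float:
    """Impact times confidence, divided by effort, scaled by timing."""
    return idea.impact * idea.confidence / idea.effort * idea.timing


def rank(ideas: list[ExperimentIdea]) -> list[ExperimentIdea]:
    """Return ideas ordered from highest to lowest priority score."""
    return sorted(ideas, key=priority_score, reverse=True)


# Illustrative backlog, not real data.
ideas = [
    ExperimentIdea("Button color tweak", impact=2, confidence=4, effort=1),
    ExperimentIdea("Pricing page proof points", impact=8, confidence=6, effort=4),
    ExperimentIdea("Onboarding survey before launch", impact=5, confidence=4,
                   effort=4, timing=2.0),
]

for idea in rank(ideas):
    print(f"{idea.name}: {priority_score(idea):.1f}")
```

In this sketch the easy-to-launch button tweak ranks last, while the survey moves up only because of its timing multiplier. The weights are a starting point for the trade-off conversation, not a replacement for it.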

The best prioritization processes create focus and alignment. They help teams understand that experimentation is not a creative playground; it is a decision-making discipline. When prioritization is done well, it becomes a way of protecting attention, capital, and momentum.

Aligning teams around revenue-driven KPIs

The shift from marketing metrics to revenue ownership changes the standard entirely. At that point, teams are no longer being evaluated on activity or visibility, but on contribution and accountability. That requires a unified view of acquisition, conversion, retention, and expansion, all measured through the lens of business outcomes rather than departmental convenience. Revenue is not owned by a team; it is built by a system.

This shift also changes the language inside the organization. Marketing stops being seen as a support function and becomes part of the economic engine of the company. That means the team cannot hide behind clicks, impressions, or superficial engagement. It has to connect its work to commercial outcomes, and it has to do that in a way that the rest of the organization can understand and trust.

To align teams around the right KPIs, the business has to define a shared chain of causality. What drives acquisition quality? What improves activation? What reduces churn? What expands lifetime value? Once teams understand that these are not isolated questions, but part of one connected revenue system, the conversation becomes much more mature. That is where performance stops being tactical and starts becoming strategic.

Turning behavioral data into actionable tests

Most organizations already know what users are doing. Far fewer understand what that behavior means in context. That is the real opportunity. The most effective teams combine quantitative evidence with qualitative depth and then translate both into decisions that are simple, testable, and commercially relevant. In a world flooded with data, judgment becomes the differentiator.

This is one of the biggest blind spots in digital marketing and product optimization today. Companies often invest heavily in dashboards, reporting layers, and behavioral tools, but still struggle to turn observation into action.

A heatmap can show you attention patterns, and a funnel can show you drop-off, but neither tells you what the user was feeling, expecting, or trying to solve in that moment. That requires interpretation, and interpretation is a human skill.

You need the numbers, but you also need the story behind the numbers. When you bring both together, the organization stops reacting to symptoms and starts addressing causes. That is where real progress begins.

Pair behavioral data with customer insights using VWO Pulse. Capture the real-time voice of customers through contextual surveys to understand what users are thinking and expecting. This brings context to the data and strengthens the quality of decisions you make.

Zero-click behavior: Impact on user decisions

Zero-click marketing has fundamentally altered the user’s arrival point. People now reach a website carrying pre-formed context, partial answers, and sharper expectations. That means the website is no longer just a place to inform; it is a place to clarify, reassure, and differentiate. In this environment, brands win by being clearer, more useful, and more credible than the summary that brought the user there.

This changes the role of content, UX, and persuasion. The user is less interested in being educated from zero and more interested in validating a direction they already suspect may be right. So the brand has to answer a different question: not “what is this?” but “why should I trust this, choose this, or act now?” That is a much more demanding commercial challenge.

In a zero-click world, the experience itself becomes part of the value proposition. If the user has already absorbed the basic answer elsewhere, the website must offer something deeper: nuance, confidence, context, proof, and a sense of conviction. The companies that adapt will stop thinking in terms of traffic alone and start thinking in terms of trust density.

When personalization adds value or complexity

Personalization creates value when it reduces effort and increases relevance without drawing attention to itself. The best personalization feels natural, not engineered. But when it becomes excessive, it starts to erode clarity and trust. The line is simple: if personalization helps the user move forward with more confidence, it adds value. If it adds complexity in the name of sophistication, it becomes self-defeating.

The mistake many brands make is believing that personalization is automatically good because it is more “advanced.” In reality, personalization only works when it is rooted in a real understanding of user context and business priority. It should help the user navigate choices, not overwhelm them with endless variations, dynamic modules, or irrelevant automation. The best personalized experiences often feel elegant because they are restrained.

Personalization should not be used to mask weak product-market fit or poor messaging. It should sharpen an already valuable experience, not compensate for a broken one. When used well, it is a precision tool. When used poorly, it becomes decorative complexity.

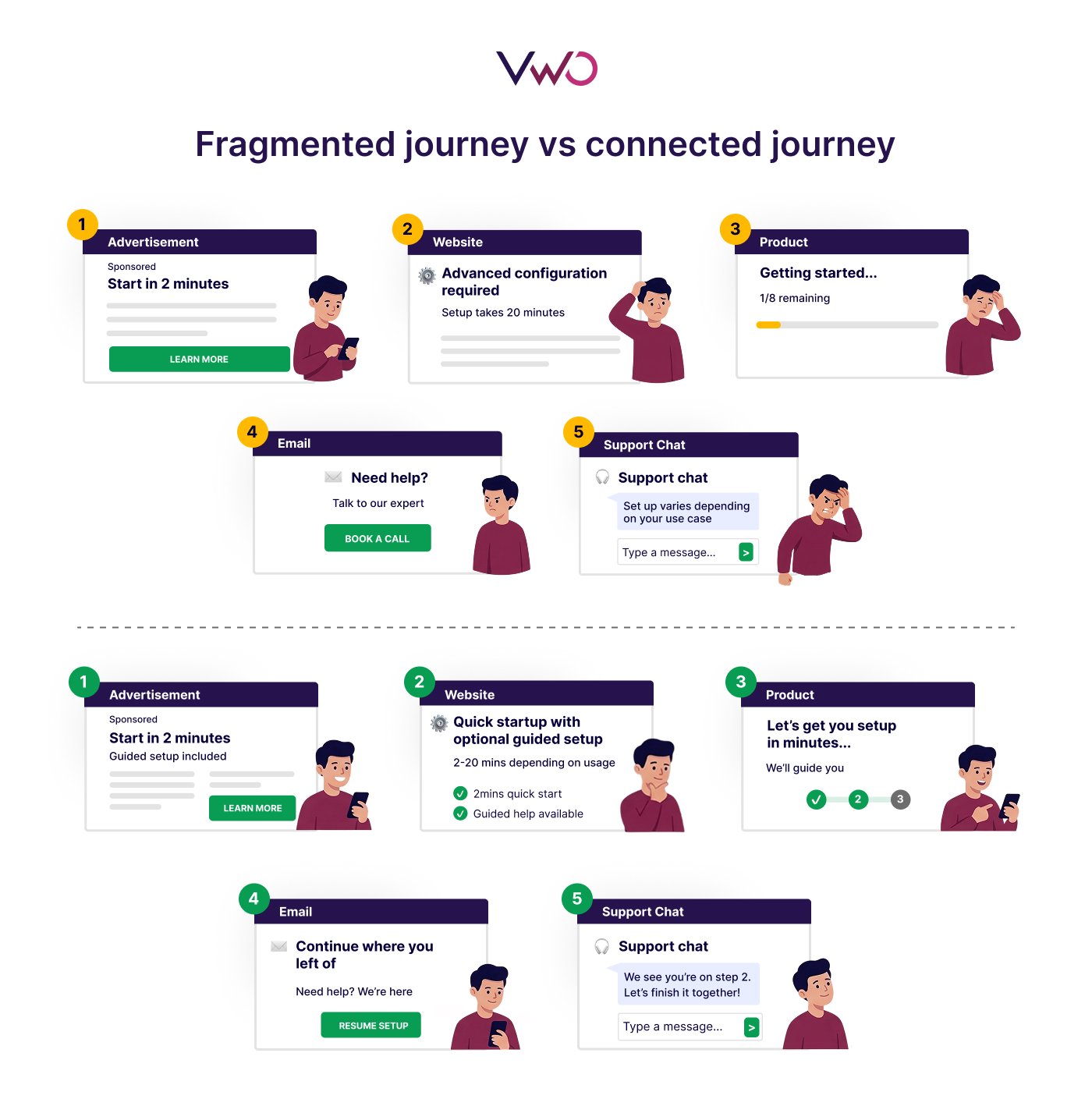

Connecting fragmented customer journeys

A fragmented journey is almost always a symptom of fragmented thinking. Users do not experience channels; they experience continuity or interruption. To create coherence, brands need alignment across message, timing, tone, and intent, not just better data integration. The hardest part is rarely technical. It is organizational. The companies that struggle most are the ones optimizing touchpoints without designing the journey as a single, connected experience.

This is where many teams underestimate the challenge. They assume omnichannel consistency is mainly a platform or CRM issue, when in reality it is a leadership issue. If marketing says one thing, product says another, and customer service delivers a third experience, the user feels that disconnect immediately. They may not be able to articulate it in business terms, but they feel the inconsistency.

Brands need to understand that omnichannel consistency is coherence built through shared intent. The journey has to feel like it belongs to one mind, one narrative, and one promise. That does not mean every touchpoint is identical; it means every touchpoint is connected. And in a market where trust is fragile, connection is a competitive advantage.

AI in experimentation: Impact and guardrails

AI is already delivering meaningful value in marketing and analytics, but its true power lies in amplifying high-quality human thinking, not replacing it. Its strongest use cases are in pattern recognition, speed of analysis, content variation, and hypothesis support. But scale without judgment is dangerous. The essential guardrails are human oversight, source traceability, and strategic accountability. AI should raise the standard of thinking, not lower the threshold for decisions.

What excites me most is not automation for its own sake, but the possibility of making teams more intelligent, more responsive, and more ambitious. AI can help surface patterns faster, reduce manual work, and widen the range of hypotheses a team can explore. That is valuable. But it cannot replace experience, intuition, or the ability to understand nuance in context. Those are still human advantages.

The teams that will win with AI are not the ones using it blindly. They are the ones building clear rules around when to trust it, when to challenge it, and when to stop and think. In other words, the future belongs to organizations that know how to combine machine scale with human judgment.

CRO in Spain: Current landscape and future trends

Spain is moving into a more mature phase of CRO and experimentation, and that evolution is overdue. The conversation is gradually shifting from tactics to decision design, which is exactly where the discipline becomes powerful. What many companies still underestimate is that CRO is not a toolset; it is a cultural capability.

The strongest progress is happening where UX, analytics, and business strategy are finally being treated as one conversation. The next advantage in the Spanish market will not come from doing more experiments. It will come from making better decisions faster.

There is still room for improvement in how many organizations think about experimentation. Too often, it is treated as a project instead of a capability, or as a conversion tactic instead of a strategic system. The companies that are moving ahead are the ones that understand that CRO is not just about lifting conversion rates; it is about reducing uncertainty. That is a much more valuable role inside a business.

At the same time, I do think the market is becoming more sophisticated. There is more openness to data, more awareness of UX, and more recognition that customer understanding is a competitive asset. The next phase will belong to companies that combine rigor with creativity, and technology with real human insight.

Conclusion

We hope you found valuable takeaways from this conversation and a clearer perspective on what it takes to build a strong experimentation system.

With VWO, teams can bring these ideas into practice by connecting insights, experimentation, and learning into a single system.

You can explore how this works with a quick demo.