Alex’s team finally got approval for their first A/B test.

After months of pitching the value of experimentation, leadership was on board.

Now came the hard part: choosing what to test first.

Everyone agreed that it should be something big. Something transformative. Something that would prove experimentation’s value once and for all.

Three months later, the “first test” was in design reviews. Six months later, it was still in development.

A year later, the momentum was gone, and the experimentation program never really took off.

Lucia van den Brink has watched this story repeat itself across organizations.

As founder of The Initial, she’s witnessed how the “big test trap” kills more conversion rate optimization (CRO) initiatives than any other single mistake.

Recently on the VWO Podcast, Lucia shared how teams can balance ambition with the practicality needed to actually ship tests and generate learnings.

Lucia’s 4-step framework for building sustainable experimentation programs

This framework treats experimentation as a matter of building the right habits, systems, and portfolio balance from day one.

Step 1: Recognize the big test trap (and why it happens)

When companies first commit to experimentation, they almost universally start with a massive idea.

This pattern emerges for understandable reasons.

Big projects naturally attract leadership attention, and there’s an implicit belief that if the change is big, the impact must be proportional.

But this logic breaks down in practice. Large-scale tests take months to design, build, and launch.

“Recently, I spoke to a product owner for experimentation, and their first test was a fraud detection system. That’s a pretty huge thing for a first test. In another case, a client started experimenting with a full redesign of their new page. This happens because big projects are more likely to get leadership buy-in or development capacity.”

They require extensive coordination across teams and often involve so many changes that isolating what actually drove results becomes nearly impossible.

Most importantly, they delay the habit-building part, which is exactly what you need to build sustainable experimentation programs.

Step 2: Focus on small tests that drive a huge impact

Modest changes often produce substantial results, sometimes more substantial than major overhauls.

Small tests offer advantages beyond speed. They’re less risky because they don’t fundamentally alter core experiences.

They also create learning opportunities that can inform larger strategic initiatives.

Example in practice

Suppose a B2B SaaS team is trying to establish an experimentation program. Instead of jumping straight into testing, they first build the supporting infrastructure.

- They set up a simple Kanban board that tracks experiments through stages.

- They schedule bi-weekly 30-minute “test review” meetings where teams share results and discuss learnings.

- They commit to running at least three tests per month for the next quarter, regardless of individual test outcomes.
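The setup above can be sketched in a few lines of code. This is a minimal illustration, not a description of any particular tool: the stage names, the `Experiment` class, and the helper functions are all hypothetical, and the target of three launches per month is taken from the commitment in the list.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Hypothetical board columns; substitute the stages your own team uses.
STAGES = ["idea", "design", "build", "running", "analysis", "done"]


@dataclass
class Experiment:
    name: str
    stage: str = "idea"
    launched: Optional[date] = None  # filled in when the test goes live

    def advance(self, today: date) -> None:
        """Move the card one column to the right on the board."""
        position = STAGES.index(self.stage)
        if position == len(STAGES) - 1:
            raise ValueError(f"{self.name} is already done")
        self.stage = STAGES[position + 1]
        if self.stage == "running":
            self.launched = today


def launches_per_month(experiments):
    """Count launched tests per (year, month) pair."""
    return Counter(
        (e.launched.year, e.launched.month)
        for e in experiments
        if e.launched is not None
    )


def months_below_target(experiments, months, target=3):
    """Return the months in which fewer than `target` tests were launched."""
    counts = launches_per_month(experiments)
    return [month for month in months if counts[month] < target]
```

Calling `months_below_target(experiments, [(2024, 1), (2024, 2), (2024, 3)])` before each review meeting would flag any month in the quarter where the team fell short of its commitment, regardless of how individual tests turned out.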

After three months, this system produces unexpected benefits.

New team members naturally ask, “Should we test this?” when proposing changes.

The team also gravitates toward testing smaller, faster ideas rather than waiting for the “perfect” hypothesis.

Six months later, they’ve run 20 tests, learned which types of experiments work best for their product, and built relationships across teams that make testing more efficient.

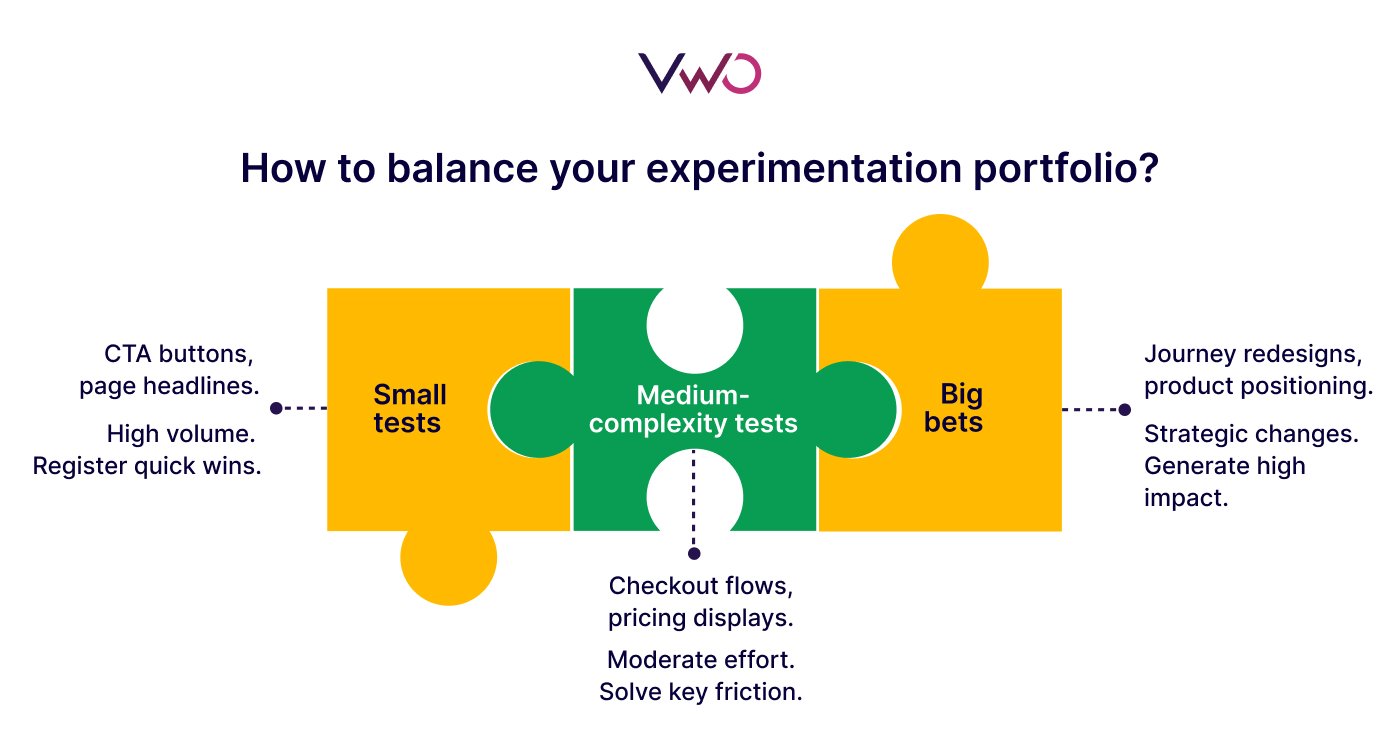

Step 3: Balance your test portfolio strategically

Once teams establish testing momentum, the next challenge is deciding what kinds of experiments to run.

- Small tests can offer quick wins and help you build proof for stakeholders (e.g., testing CTA button copy, urgency messaging, or trust badge placement).

- Medium-complexity tests address significant barriers without requiring months of development (e.g., simplifying checkout flows, testing new pricing displays, or restructuring product pages).

- Big bets tackle questions or challenges that could reshape your business model (e.g., complete journey redesigns, new product positioning, or fundamental feature changes).

Sorting planned tests into these three tiers ensures that decisions about your experimentation portfolio are intentional and explicit, rather than something that happens on its own.
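One way to make the portfolio explicit is to tag every planned test with a tier and compare the resulting mix against a target. The sketch below is illustrative only. The target ratios in `TARGET_MIX` and the tolerance are assumptions chosen for the example; the framework itself does not prescribe specific numbers.

```python
from collections import Counter

# Hypothetical target mix; the article does not prescribe ratios.
TARGET_MIX = {"small": 0.6, "medium": 0.3, "big": 0.1}


def portfolio_mix(sizes):
    """Return the share of planned tests that falls into each tier."""
    unknown = set(sizes) - set(TARGET_MIX)
    if unknown:
        raise ValueError(f"unknown tiers: {sorted(unknown)}")
    if not sizes:
        raise ValueError("no tests planned")
    counts = Counter(sizes)
    return {tier: counts[tier] / len(sizes) for tier in TARGET_MIX}


def mix_gaps(sizes, tolerance=0.15):
    """Return tiers whose share deviates from the target by more than `tolerance`.

    Positive values mean the tier is over-represented, negative values
    mean it is under-represented.
    """
    mix = portfolio_mix(sizes)
    return {
        tier: round(mix[tier] - TARGET_MIX[tier], 2)
        for tier in TARGET_MIX
        if abs(mix[tier] - TARGET_MIX[tier]) > tolerance
    }
```

For a roadmap with two small tests, one medium test, and two big bets, `mix_gaps` would report that small tests are under-represented and big bets over-represented, which is the pattern the big test trap tends to produce.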

“An experimentation program is all of that, but in a balanced way. So all of those really big things, all the small things, and just trying to learn as much as possible in the smartest way.”

Step 4: Use big tests to validate, not explore

Large-scale experiments remain important for major strategic decisions, such as redesigning core experiences, changing pricing models, or introducing new product flows.

But teams should only attempt them after accumulating meaningful insights from smaller tests and gaining confidence about the direction.

The big tests can validate what smaller tests have already suggested and compound previous wins rather than exploring completely unknown territory.

For example, suppose you run a few small tests on your home page:

“Track your expenses easily” (feature-focused) vs. “Save more money every month” (benefit-focused).

The results indicate that users respond better to benefit-driven messaging, which is a crucial learning that can be carried into the next redesign.
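Before carrying a learning like this forward, it helps to check that the difference between the two headlines is unlikely to be noise. The sketch below uses a two-proportion z-test, which is one common way to compare conversion rates. It is not necessarily the method a given testing platform applies, and the function name and any visitor or conversion counts you pass in are hypothetical.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for the difference between two conversion rates.

    conv_a, conv_b: number of conversions for variants A and B
    n_a, n_b: number of visitors who saw variants A and B
    Returns (z, p_value); a positive z means B converted better than A.
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants perform the same
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("conversion rates of 0% or 100% cannot be compared")
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value
```

With invented numbers such as 200 conversions out of 5,000 visitors for the feature-focused headline and 260 out of 5,000 for the benefit-focused one, `two_proportion_z_test(200, 5000, 260, 5000)` returns a p-value below 0.01, which would support treating the benefit-driven result as a real effect rather than chance.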

In that redesign, you can keep the benefit-driven messaging and test other new elements, such as visual hierarchy, social proof, or navigation, to further improve conversions.

This approach reduces the risk associated with large-scale experiments as you’re now making informed changes backed by behavioral evidence from real users.

Lucia emphasizes this approach:

“I’m always an advocate for starting small. Get evidence for the case of experimentation, and why it’s good. Find an A/B test that works, another A/B test where you learn from, then slowly get more people on board, and get evidence for the case of working data-driven.”

Building your own sustainable experimentation program

Lucia’s framework demonstrates that successful experimentation is built through consistent habits, balanced portfolios, and systems that make testing feel natural rather than exceptional.

The companies with mature experimentation programs didn’t get there through occasional big swings. They got there by:

- Making experimentation a daily practice.

- Treating small optimizations as just as valuable as major initiatives.

- Building the organizational capability to test continuously.

If you’re ready to put this framework into action, you need a platform that enables you to collaborate seamlessly across teams and manage your entire optimization program in one place.

With VWO, teams can record all insights or notes related to their optimization efforts, manage and prioritize experimentation ideas, and track how each experiment impacts key metrics.

Schedule a demo now to explore these capabilities first-hand.