What We Learned After Gen AI-based NPS Went Mainstream

Generative AI has changed NPS surveys by automating the setup, analysis, and follow-up that used to be done manually. Businesses can now scale feedback collection without expanding their teams. AI-powered next-best-experience approaches have reportedly boosted customer satisfaction by 15 to 20 percent.

But here’s the thing.

A year into widespread Gen AI-based NPS adoption, we’re seeing patterns. Some capabilities delivered on the promise, while others created new challenges nobody anticipated. Plus, a few “innovations” need more careful consideration than they’re getting.

What Generative AI brought to the table

The improvements showed up across timing, design, and analysis. Each solved a different friction point in traditional NPS programs.

a. Right moment NPS deployment powered by behavioral signals

Traditional NPS surveys run on fixed schedules: send after 30 days, 60 days, 90 days. The problem is that by the time the survey arrives, some customers have already forgotten key details.

Gen AI changed the trigger logic.

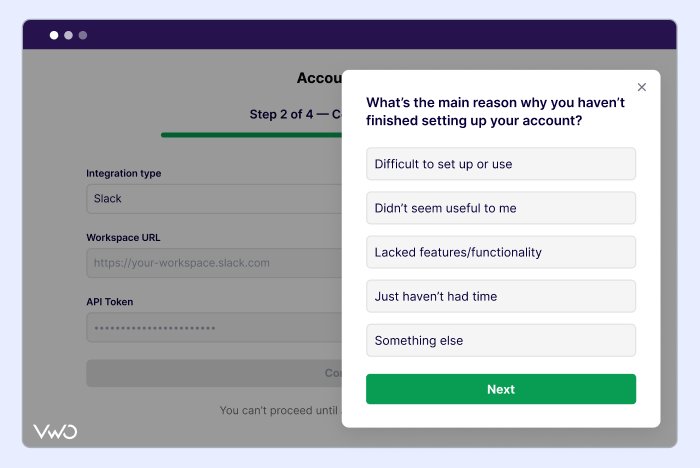

Gen AI watches for behavioral signals that indicate genuine experience. It triggers a survey based on specific actions, such as when a customer closes a support ticket, drops off after signing up, or adopts a new feature. The survey fires while the experience is fresh, so the feedback is actually informed.
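The trigger logic described above can be sketched roughly as follows. This is a minimal illustration, not how any particular product implements it: the event names, the `Event` type, and the 30-day cool-down between surveys are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical event names; a real product would define its own.
TRIGGER_EVENTS = {"support_ticket_closed", "signup_drop_off", "feature_adopted"}

# Assumed cool-down so the same customer is not surveyed again too soon.
COOLDOWN = timedelta(days=30)


@dataclass
class Event:
    customer_id: str
    name: str
    occurred_at: datetime


def should_trigger_survey(event: Event, last_surveyed_at: Optional[datetime]) -> bool:
    """Return True when the event is a trigger and the customer is outside the cool-down."""
    if event.name not in TRIGGER_EVENTS:
        return False
    if last_surveyed_at is None:
        return True  # never surveyed before
    return event.occurred_at - last_surveyed_at >= COOLDOWN
```

The point of the sketch is that the survey is keyed to what the customer just did rather than to a calendar date. A Gen AI system would typically infer which signals matter instead of reading them from a fixed set.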

b. Smarter NPS survey creation with dynamic question paths

Traditional NPS surveys asked everyone the same follow-up questions. A promoter and a detractor both got “Why did you give us this score?”

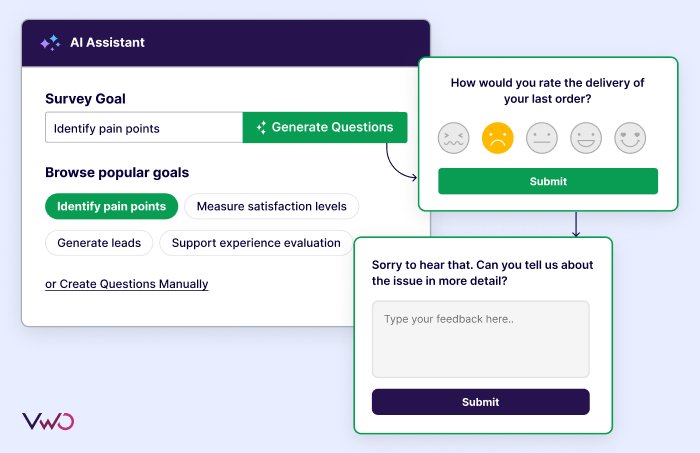

With Gen AI, you set a goal like understanding why detractors are unhappy or what drives promoters to recommend you, and AI generates relevant follow-up questions. If it misses the mark, regenerate new options or edit specific questions yourself.

Now, the survey adapts in real-time. An unhappy user is asked questions about specific pain points, while a passive user is asked what’s holding them back.
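As a rough sketch of how a question path can branch on the score, the example below uses the standard NPS segments (0 to 6 detractor, 7 to 8 passive, 9 to 10 promoter). The follow-up wording and the `FOLLOW_UPS` lookup are hypothetical; in a Gen AI-based survey the questions would be generated from your stated goal rather than read from a fixed table.

```python
def nps_segment(score: int) -> str:
    """Map a 0-10 score to the standard NPS segment."""
    if not 0 <= score <= 10:
        raise ValueError("NPS scores range from 0 to 10")
    if score >= 9:
        return "promoter"
    if score >= 7:
        return "passive"
    return "detractor"


# Hypothetical follow-ups; stands in for AI-generated questions.
FOLLOW_UPS = {
    "promoter": "What do you value most about the product?",
    "passive": "What is holding you back from rating us higher?",
    "detractor": "Which specific problem affected your experience the most?",
}


def next_question(score: int) -> str:
    """Pick the follow-up question that matches the respondent's segment."""
    return FOLLOW_UPS[nps_segment(score)]
```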

Teams also started testing surveys with synthetic responses generated from AI customer personas built on real customer data. These personas emulate human behavior and decision making, letting teams validate question quality and flow logic before deployment.

c. Automated analysis and intelligent feedback routing

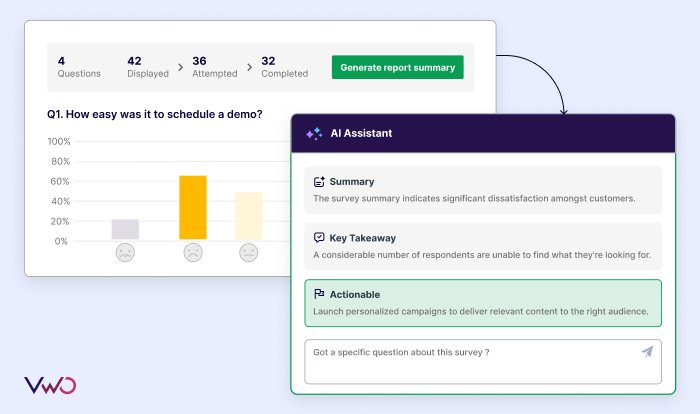

AI-powered reports surface key insights automatically. Teams receive summaries of what matters, view patterns across open-ended feedback, and derive actionable suggestions tied directly to the data.

Gen AI doesn’t just analyze NPS responses; it decides who needs to see what and how urgently. For example, if a user mentions problems in the checkout process, AI routes it to engineering and tags it as urgent.

Teams no longer spend hours sifting through spreadsheets. The right feedback reaches the right people without manual sorting, and critical issues get attention before they become churn risks.

The system also ranks feedback by business impact. For example, detractors on annual contracts get flagged differently from monthly subscribers.
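To make the routing and ranking idea concrete, here is a simplified sketch. The keyword map stands in for an AI classifier, and the team names, plan weights, and priority formula are assumptions chosen for illustration only.

```python
from dataclasses import dataclass

# Hypothetical keyword-to-team map; a Gen AI system would classify the text instead.
TEAM_KEYWORDS = {
    "engineering": ("checkout", "crash", "error", "bug"),
    "billing": ("invoice", "refund", "charge"),
}

# Assumed weights: annual contracts carry more business impact than monthly plans.
PLAN_WEIGHT = {"annual": 2, "monthly": 1}


@dataclass
class Response:
    score: int    # 0-10 NPS score
    comment: str  # open-ended feedback
    plan: str     # "annual" or "monthly"


def route(response: Response) -> str:
    """Return the team that should see this response."""
    text = response.comment.lower()
    for team, keywords in TEAM_KEYWORDS.items():
        if any(keyword in text for keyword in keywords):
            return team
    return "customer_success"  # default owner when nothing matches


def priority(response: Response) -> int:
    """Higher number means more urgent: detractors count double, scaled by plan weight."""
    base = 2 if response.score <= 6 else 1
    return base * PLAN_WEIGHT.get(response.plan, 1)
```

With these assumed weights, a detractor on an annual contract who mentions checkout is routed to engineering with priority 4, while the same complaint from a monthly subscriber gets priority 2.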

These improvements are real. But the first year of Gen AI-based NPS also revealed gaps that surfaced only with real-world use.

The gaps in Gen AI-based NPS

Widespread adoption of Gen AI-based NPS exposed limitations that only became clear when teams moved beyond proofs of concept into daily operations.

a. Synthetic testing misses what real customers share

Synthetic testing did speed up survey validation. AI personas built from customer data could emulate behavior patterns and test question flows faster than real responses.

But here’s the problem. These personas only capture what happened on your website in the past. A real customer has financial stakes, and they’re proving ROI to their organization. They’re making decisions based on current business pressures, market shifts, and internal politics.

Gen AI predicts behavior from historical data. It can’t replicate the complexity of someone with actual consequences riding on their choices. A synthetic persona might predict positive sentiment for a user completing your onboarding flow. But a real customer rushing to hit a launch deadline might score you low because your setup took two days when they needed it done in two hours.

The synthetic user has no deadline, while the real one does. That pressure changes everything about how they evaluate you. Teams learned this gap the hard way once they compared synthetic insights to real feedback.

b. AI surveys losing their distinctiveness

AI trained on similar datasets produces similar design patterns. Question phrasing, visual layouts, and follow-up logic converge toward what maximizes responses, not what captures genuine sentiment. Research shows AI tools can limit originality by relying heavily on training data patterns, creating design fixation across outputs.

High completion rates can mask shallow feedback when questions are optimized for clicks rather than depth. This optimization creates surveys that look professional but fail to capture the nuance that makes NPS feedback actionable.

These gaps show up across companies using Gen AI for NPS at scale. Moving ahead means finding the right combo of automation and human oversight.

The smarter path forward

a. Teams should interpret insights and prioritize what to fix

Gen AI surfaces patterns, but the team members managing surveys should analyze those patterns before making decisions rather than relying solely on Gen AI’s suggested next steps.

Research shows LLMs surface correlations but lack genuine causal reasoning. They don’t understand your business model, competitive positioning, or resource constraints. Use AI to process feedback at scale, but own the interpretation and decisions yourself.

For example, imagine you’re a product manager and your AI flags complaints about the mobile app and routes them to engineering as a high priority. However, you just launched a redesign two weeks ago, and users are still adjusting to the new navigation.

The complaints could indicate real issues that need addressing or simply be temporary adjustment noise. As a product manager, you need to inspect the details, talk to users, and decide whether to tweak the design or allow more time for users to settle in.

b. Be transparent about Gen AI’s role in your NPS process

Document what your Gen AI handles automatically versus what requires human review. Make it visible which feedback gets AI-routed, which insights are AI-generated, and where humans intervene.

Create simple documentation that shows the workflow:

- AI categorizes sentiment and routes urgent issues.

- Product managers review all routing for enterprise accounts.

- Customer success manually responds to detractor scores below 3.

Documented workflows reduce confusion and help stakeholders make better decisions about when to rely on AI and when to question it.

c. Validate NPS feedback through experimentation

Gen AI surfaces patterns in your NPS feedback, but insights without action are just noise. For example:

- Audit AI regularly to ensure insights are accurate and not just fast – test categorization accuracy against human judgment, validate routing logic, and run monthly checks to catch drift early.

- When you spot issues like checkout friction, run an A/B test with a simplified flow and measure if NPS scores improve.

- If you see promoters praising a specific feature, create a personalized experience that highlights it for similar users.
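The audit in the first item above can start as a simple agreement check between AI-assigned categories and a human-labeled sample. The sketch below assumes both label lists cover the same responses in the same order; the 0.9 threshold is an arbitrary placeholder, not a recommended value.

```python
from typing import Sequence


def agreement_rate(ai_labels: Sequence[str], human_labels: Sequence[str]) -> float:
    """Share of responses where the AI category matches the human category."""
    if len(ai_labels) != len(human_labels):
        raise ValueError("Both label lists must cover the same responses")
    if not ai_labels:
        raise ValueError("Need at least one labeled response")
    matches = sum(a == h for a, h in zip(ai_labels, human_labels))
    return matches / len(ai_labels)


def needs_review(ai_labels: Sequence[str], human_labels: Sequence[str],
                 threshold: float = 0.9) -> bool:
    """Flag the categorizer for review when agreement falls below the assumed threshold."""
    return agreement_rate(ai_labels, human_labels) < threshold
```

Running this on a fresh sample each month and tracking the rate over time is one way to catch drift early.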

Testing validates whether fixing the issue actually moves satisfaction scores. It also confirms whether your Gen AI is identifying real problems or flagging noise. When tests show improvement, you know the AI is surfacing the right patterns.
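For reference, NPS is the percentage of promoters (scores 9 to 10) minus the percentage of detractors (scores 0 to 6). A minimal sketch of comparing a control and a variant might look like the following. The function names are illustrative, and the comparison deliberately ignores sample size and statistical significance, which a real A/B test would need to account for.

```python
from typing import Sequence


def nps(scores: Sequence[int]) -> float:
    """Net Promoter Score: % promoters (9-10) minus % detractors (0-6)."""
    if not scores:
        raise ValueError("Need at least one score")
    promoters = sum(s >= 9 for s in scores)
    detractors = sum(s <= 6 for s in scores)
    return 100 * (promoters - detractors) / len(scores)


def nps_lift(control: Sequence[int], variant: Sequence[int]) -> float:
    """Difference in NPS between variant and control (positive means improvement)."""
    return nps(variant) - nps(control)
```

A positive lift on a small sample can still be noise, so treat the number as a prompt to check significance rather than as proof that the fix worked.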

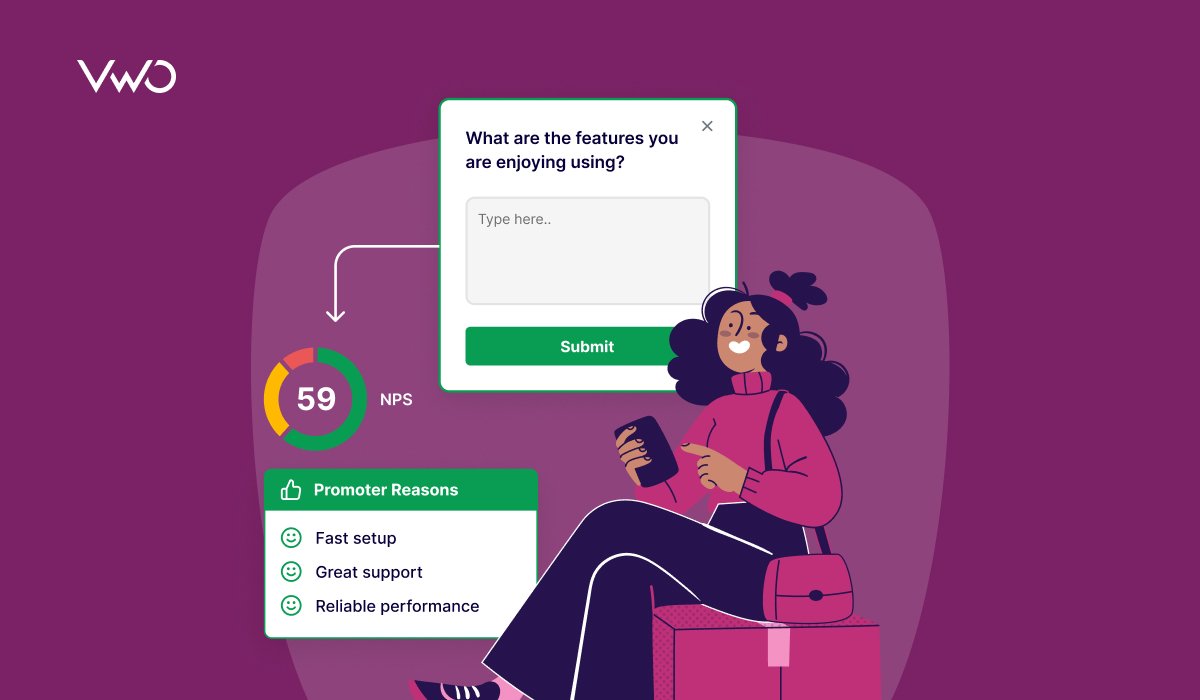

Deploying NPS surveys with VWO Pulse

The smarter path requires the right tools. You need Gen AI speed for pattern detection, human control over priorities, and testing to validate what works. VWO Pulse brings all three together in one platform.

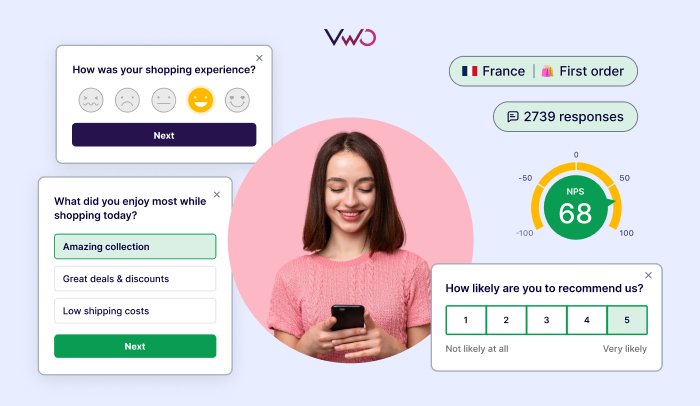

VWO Pulse is a voice-of-the-customer product that captures in-the-moment, contextual feedback across your digital channels (website, mobile app, in-product, and email surveys), all fed into one place. Feedback is no longer scattered across tools, and you get a complete view of customer sentiment wherever customers interact with you.

Pulse also uses AI Copilot to automatically generate survey questions based on your goals. You set what you want to understand about your users, and AI Copilot generates relevant questions. If the questions need refinement, you can regenerate or edit them yourself. Once your survey receives responses, AI Copilot provides key insights and actionable suggestions automatically.

Most importantly, VWO Pulse connects directly to VWO’s testing and personalization products. For example, if VWO Copilot (a Gen AI feature) flags that users are dropping off at checkout, you can investigate the context and decide how to address it. You can then create an A/B test or a variant based on user feedback and launch it to users. Request a free demo today to see how it suits your business needs.